From Signal to Insight: A Complete Guide to EGM Processing for Machine Learning in Cardiac Electrophysiology Research

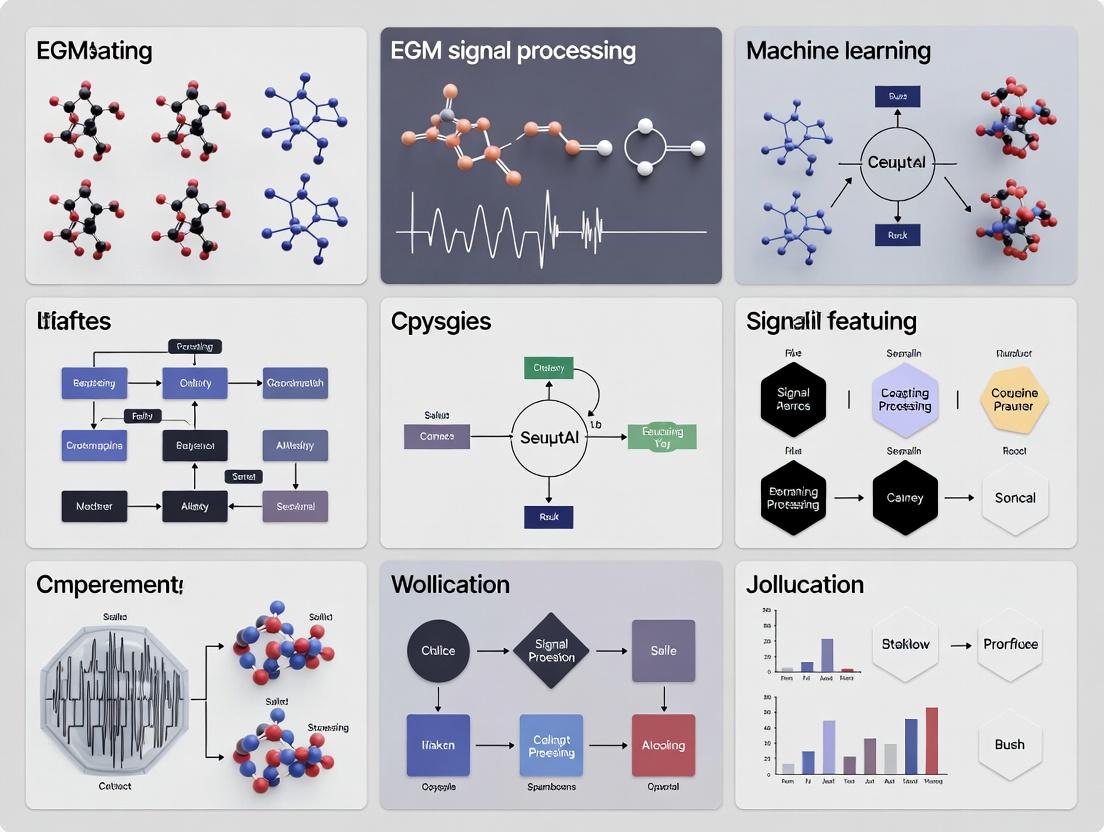

This article provides a comprehensive guide for researchers and drug development professionals on processing intracardiac Electrogram (EGM) signals for machine learning feature extraction.

From Signal to Insight: A Complete Guide to EGM Processing for Machine Learning in Cardiac Electrophysiology Research

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on processing intracardiac Electrogram (EGM) signals for machine learning feature extraction. It covers foundational concepts of EGM biophysics and noise, details preprocessing pipelines (filtering, segmentation, artifact removal) and feature engineering methods (time-domain, frequency-domain, non-linear). The guide addresses common challenges in signal quality and dataset imbalance, and establishes robust validation frameworks for comparing traditional biomarkers against ML-derived features. The goal is to equip scientists with the practical knowledge to build reliable, clinically translatable ML models for arrhythmia study and drug efficacy assessment.

Understanding the Raw Material: The Biophysics, Noise, and Components of Intracardiac EGMs

What is an EGM? Defining Intracardiac vs. Surface ECG Signals and Their Unique Information Content

An Electrogram (EGM) is a recording of the heart's electrical activity measured directly from the heart's surface or from within its chambers. This contrasts with a surface Electrocardiogram (ECG), which measures the same bioelectrical phenomena from electrodes placed on the skin. The proximity of EGM electrodes to the cardiac tissue provides a high-fidelity, localized signal with distinct information content compared to the spatially and temporally integrated view of the ECG.

Comparative Signal Characteristics

The fundamental differences between intracardiac EGM and surface ECG signals are summarized in the table below.

Table 1: Key Characteristics of Surface ECG vs. Intracardiac EGM

| Parameter | Surface ECG | Intracardiac EGM |

|---|---|---|

| Electrode Location | Skin surface (limbs, chest) | Endocardial/Epicardial surface, within chambers |

| Signal Amplitude | 0.5 - 5 mV | 5 - 20 mV (often higher) |

| Frequency Bandwidth | 0.05 - 150 Hz (diagnostic) | 1 - 500+ Hz (up to 1kHz for research) |

| Spatial Resolution | Low (whole-heart summation) | High (localized, < 1 cm² area) |

| Primary Information | Global cardiac rhythm, conduction pathways, gross morphology | Local activation timing, fractionated potentials, depolarization/repolarization details |

| Key Components | P wave, QRS complex, T wave | Local activation potential, far-field components, stimulus artifacts |

| Dominant Noise Sources | Motion artifact, muscle EMG, powerline interference | Electrode-tissue interface noise, instrumentation noise |

Unique Information Content and Physiological Basis

The information derived from each modality serves complementary purposes:

- Surface ECG: Represents the summed vector of all cardiac depolarization and reparization waves as they propagate through the volume conductor of the body. It is the gold standard for diagnosing arrhythmias (e.g., atrial fibrillation, ventricular tachycardia), conduction disorders (e.g., AV block), and ischemia.

- Intracardiac EGM: Provides a direct measurement of local myocardial activation. Key features include:

- Activation Timing: Precise local activation time (LAT) for mapping.

- Fractionated Potentials: Low-amplitude, high-frequency signals indicative of scarred or diseased tissue, critical for substrate-based ablation.

- Voltage: Amplitude correlates with local tissue health (e.g., scar voltage < 0.5 mV).

- Stimulus-Response: Direct capture and pacing threshold measurements.

Experimental Protocols for EGM/ECG Data Acquisition in Research

Protocol 1: Simultaneous Acquisition of Surface ECG and Intracardiac EGM in Preclinical Models

- Objective: To correlate global cardiac electrical activity (ECG) with local myocardial electrophysiology (EGM) for feature validation.

- Materials: See "The Scientist's Toolkit" below.

- Methodology:

- Anesthetize and instrument the animal model (e.g., porcine, canine) according to IACUC-approved protocols.

- Place standard limb lead ECG electrodes on shaved skin.

- Under fluoroscopic or electroanatomical mapping guidance, advance a diagnostic electrophysiology catheter (e.g., duodecapolar, or mapping catheter) to the target chamber (e.g., right atrium, left ventricle).

- Connect both ECG surface electrodes and intracardiac catheter to a multi-channel bio-amplifier/recording system with a sampling rate ≥ 2 kHz per channel.

- Record a minimum of 5 minutes of baseline rhythm. Induce arrhythmia if required by the protocol (e.g., via programmed electrical stimulation).

- Synchronize all data streams using a common analog or digital trigger.

- Apply band-pass filtering post-acquisition (ECG: 0.5-150 Hz; EGM: 1-500 Hz).

- Annotate key fiducial points (ECG: P onset, R peak; EGM: local activation peak/dV/dt max) for temporal analysis.

Protocol 2: Processing EGM Signals for Machine Learning Feature Extraction

- Objective: To generate a curated dataset of EGM features for arrhythmia classification or outcome prediction models.

- Workflow: The following diagram outlines the core signal processing and feature engineering pipeline.

Diagram Title: EGM Feature Extraction Pipeline for ML

- Detailed Steps:

- Pre-Processing: For each EGM channel, apply a 2nd-order 50/60 Hz notch filter, followed by a band-pass filter (e.g., 1-300 Hz Butterworth). Normalize amplitude (zero-mean, unit variance).

- Activation Detection: Use a validated algorithm (e.g., steepest negative dV/dt, wavelet transform) to mark the local activation time (LAT) for each beat.

- Beat Segmentation: Extract a window of data (e.g., 200 ms) centered on each detected LAT to create individual beat epochs. Reject epochs with excessive noise.

- Feature Extraction:

- Time-Domain: Peak-to-peak amplitude, slew rate (max dV/dt), duration at 50% amplitude, root mean square (RMS).

- Frequency-Domain: Dominant frequency, peak power spectral density, spectral entropy.

- Non-Linear: Wavelet entropy, fractal dimension, Lyapunov exponent (for sequential beats).

- Dataset Curation: Tabulate features with labels (e.g., sinus rhythm, scar zone, arrhythmia type) into a structured array (e.g., .csv, .h5) for ML model input.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for EGM/ECG Research

| Item | Function & Application |

|---|---|

| High-Density Mapping Catheter (e.g., PentaRay, HD Grid) | Provides simultaneous, spatially precise EGM recordings from multiple electrodes (e.g., 20-64 poles) for creating detailed activation maps. |

| Programmed Electrical Stimulator | Delivers precise pacing protocols (S1-S2, burst pacing) to induce and study arrhythmias in controlled experimental settings. |

| Multi-Channel Bioamplifier/Data Acquisition System (e.g., from ADInstruments, BIOPAC) | Amplifies, filters, and digitizes low-amplitude biological signals from both surface and intracardiac electrodes simultaneously. |

| 3D Electroanatomical Mapping System (e.g., CARTO, EnSite) | Integrates EGM location, timing, and voltage with 3D geometry to create maps of cardiac electrical activity. Essential for translating local EGM data to structural context. |

| Signal Processing Software (e.g., LabChart, MATLAB with Signal Processing Toolbox, custom Python scripts) | Performs critical offline analysis: filtering, annotation, feature extraction, and statistical analysis of acquired EGM/ECG data. |

| Langendorff Perfused Heart Setup | Ex vivo model allowing for controlled, motion-stable acquisition of high-fidelity epicardial and endocardial EGMs without systemic confounding factors. |

This application note details experimental protocols for investigating the biophysical basis of intracardiac electrogram (EGM) components. The work is framed within a broader thesis on developing interpretable machine learning features for cardiac electrophysiology. The core objective is to establish a causal, quantitative mapping between measurable tissue properties (e.g., conduction velocity, fibrosis density, ion channel function) and the morphological characteristics of EGM signals (far-field vs. near-field, unipolar vs. bipolar). This foundational mapping is essential for creating biologically grounded feature sets for ML models in arrhythmia research and drug development.

EGM Component Definitions and Determinants

| EGM Component | Definition | Primary Biophysical Determinants | Typical Frequency Range | Spatial Sensitivity |

|---|---|---|---|---|

| Near-Field | Signal from myocytes within ~1-2 mm of electrode. | Local transmembrane action potential (TAP) morphology, local coupling resistance, direct tissue-electrode contact. | 40-250 Hz | Highly localized (~1-2 mm radius). |

| Far-Field | Signal from myocardium remote (>1 cm) from electrode. | Global cardiac electrical propagation, tissue mass, tissue anisotropy, chamber geometry. | 1-40 Hz | Broad, whole-chamber or cross-chamber. |

| Unipolar | Potential difference between intracardiac electrode and distant reference. | Summation of all electrical activity (near-field + far-field) along the path to the reference. TIP: Broad spatial view. | 0.5-250 Hz | Very broad, omnidirectional. |

| Bipolar | Potential difference between two closely spaced intracardiac electrodes. | Spatial gradient of electrical potential. Emphasizes high-frequency components near the electrode pair. TIP: Localizes signal source. | 30-500 Hz | Directional, localized to inter-electrode axis. |

Quantitative Relationships: Tissue Properties to EGM Features

Table summarizing key quantitative mappings derived from experimental and simulation studies.

| Tissue Property | Measured Metric | Primary EGM Impact | Quantifiable Effect on EGM | Approximate Scaling Law (from models) |

|---|---|---|---|---|

| Conduction Velocity (CV) | cm/ms | Bipolar EGM width, slew rate (dV/dt). | CV ↓ → Bipolar width ↑, amplitude ↓, fractionation ↑. | Bipolar Width ∝ 1 / CV (local). |

| Fibrosis Density | % area or collagen volume fraction (CVF). | Near-field amplitude, bipolar fractionation, late potentials. | CVF > 10-15% → consistent fractionation, amplitude reduction > 50%. | Signal Amplitude ∝ exp(-k * CVF). |

| Tissue Mass / Wall Thickness | mm or g | Far-field amplitude in unipolar signals. | Mass ↑ → Far-field amplitude ↑ linearly in unipolar EGMs. | Unipolar FF Amplitude ∝ Mass (remote). |

| Ion Channel Dysfunction (e.g., INa) | Maximal dV/dt of TAP | Bipolar EGM slew rate, near-field amplitude. | dV/dtmax ↓ 50% → Bipolar slew rate ↓ ~40%, amplitude ↓ ~30%. | Slew Rate ∝ dV/dtmax. |

| Electrode-Tissue Distance | mm | Near-field amplitude, high-frequency content. | Distance ↑ 1mm → Bipolar amplitude ↓ ~50%, high-freq. power ↓ sharply. | Amplitude ∝ 1 / Distance² (near-field). |

Experimental Protocols

Protocol: Ex Vivo Mapping of Focal Fibrosis to Bipolar EGM Fractionation

Objective: To empirically correlate spatially registered histology (fibrosis quantification) with high-density bipolar EGM recordings.

Materials: Langendorff-perfused explanted heart (small animal or human), optical mapping system (optional), micro-electrode array (MEA) or multipolar catheter, perfusion system, rapid tissue freezer, histology setup (fixation, embedding, picrosirius red stain), confocal/standard microscope, co-registration software.

Methodology:

- Heart Preparation & Perfusion: Establish Langendorff perfusion with oxygenated Tyrode's solution. Maintain temperature (37°C), pH (7.4), and perfusion pressure.

- High-Density Electrophysiological Mapping:

- Position a high-density MEA (e.g., 128 electrodes, 0.5-1.0 mm spacing) on the epicardial region of interest (ROI).

- Record bipolar EGMs from all adjacent electrode pairs during steady-state pacing (cycle length 400-600ms).

- For each bipolar EGM, extract features: Number of Peaks (fractionation index), Peak-to-Peak Amplitude, Duration (total activation time), and Slew Rate.

- Create spatial maps of each EGM feature.

- Tissue Registration & Freezing:

- Mark the MEA boundaries on the epicardium with sterile dye pins.

- Rapidly excise the mapped ROI and freeze in optimal cutting temperature (OCT) compound using isopentane cooled by liquid nitrogen.

- Histological Processing & Co-Registration:

- Serially section tissue (5-10 µm thickness) perpendicular to epicardium.

- Stain with picrosirius red for collagen quantification.

- Image sections under polarized light (collagen appears birefringent) to compute Collagen Volume Fraction (CVF) per microscopic field (e.g., 200x200 µm).

- Using the dye marks and blood vessel patterns, digitally co-register each histological field with its corresponding EGM recording site from the MEA map.

- Statistical Correlation: Perform linear/multivariate regression analysis between local CVF and bipolar EGM features (e.g., Number of Peaks, Amplitude).

Protocol: In Silico Study of Ion Channel Block on Unipolar vs. Bipolar EGMs

Objective: To isolate the effect of specific ionic current reduction (simulating drug effect) on EGM component morphology using a computational model.

Materials: Multi-scale computational modeling software (e.g., OpenCARP, COMSOL, custom Matlab/Python with CellML). Models: Human ventricular myocyte model (e.g., O'Hara-Rudy, Tomek-Rodriguez), 2D or 3D monodomain/bidomain tissue slab model with realistic fibrosis patterns, virtual electrode arrays.

Methodology:

- Baseline Model Construction:

- Implement a 2D tissue sheet (e.g., 5x5 cm) with assigned fiber orientation.

- Incorporate a zone of diffuse fibrosis (15-30% CVF) using a fibroblast coupling model or by altering conductivity.

- Define virtual electrode locations: one unipolar (with distant reference) and one bipolar pair (2mm spacing) placed centrally.

- Simulation of Propagation & EGMs:

- Stimulate at one edge to generate planar wave propagation across the sheet.

- Solve the monodomain/bidomain equations to compute extracellular potentials at each electrode.

- Extract Baseline Unipolar EGM (showing near-field and far-field components) and Baseline Bipolar EGM (subtraction of two nearby unipolars).

- Intervention - Ion Channel Block:

- In the cell model, reduce the maximum conductance (gmax) of a target current (e.g., INa by 50%, ICa by 30%, IKr by 90%).

- Re-run the simulation with identical pacing.

- Extract Post-Block Unipolar and Bipolar EGMs.

- Feature Extraction & Comparison:

- For Unipolar: Measure Far-field amplitude (early/low-freq component), Near-field amplitude (sharp, high-freq peak), Total duration.

- For Bipolar: Measure Peak-to-peak amplitude, Slew rate (max dV/dt), Duration.

- Compute percentage change from baseline for each feature under each channel block condition.

- Output: A table linking specific channel block to directional changes in specific EGM components, informing ML feature selection for drug effect classification.

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Reagent | Function in EGM-Biophysics Research | Example Product / Model |

|---|---|---|

| High-Density Multipolar Catheter/MEA | Provides spatially precise recording of EGMs for near-field localization and fractionation analysis. | PentaRay NAV Catheter (Biosense Webster), Advisor HD Grid Mapping Catheter (Abbott). |

| Optical Mapping Dye (Voltage-Sensitive) | Validates electrical propagation maps and provides gold-standard conduction velocity independent of electrodes. | RH237, Di-4-ANEPPS. |

| Perfusion System (Langendorff) | Maintains ex vivo heart viability and electrophysiological stability for controlled experiments. | Radnoti Langendorff System. |

| Histology Collagen Stain | Quantifies interstitial fibrosis (key tissue property) for direct correlation with EGM. | Picrosirius Red Stain Kit (Polysciences). |

| Computational Cardiac Electrophysiology Platform | Allows in silico perturbation of tissue properties (CV, fibrosis, ion channels) in isolation to study EGM effects. | OpenCARP (open-source), COMSOL Multiphysics with ACID add-on. |

| Fractionation Analysis Software | Automates detection and quantification of complex, fractionated EGMs (number of peaks, duration, voltage). | LabSystem PRO EP Recording System (Boston Scientific), custom Matlab/Python toolkits. |

Visualization Diagrams

Title: Mapping Tissue Properties to EGM Features

Title: Ex Vivo EGM-Fibrosis Correlation Workflow

Title: In Silico EGM Sensitivity Analysis Protocol

Within the thesis "Advanced EGM Signal Processing for Robust Machine Learning Feature Extraction in Cardiac Safety Pharmacology," accurate identification and mitigation of noise is paramount. Intracardiac electrogram (EGM) signals, crucial for assessing cardiac electrophysiology in preclinical and clinical drug development, are susceptible to corruption by pervasive noise sources. These artifacts can obscure true biological signals, leading to inaccurate feature extraction and compromising machine learning model performance. This document details the characterization and experimental protocols for three predominant noise enemies: Baseline Wander (BW), Powerline Interference (PLI), and Motion Artifact (MA).

The table below summarizes the key attributes of each noise source, essential for designing digital filters and ML denoising algorithms.

Table 1: Quantitative Characterization of Common EGM Noise Sources

| Noise Source | Typical Frequency Range | Amplitude Range | Primary Origin | Key Morphological Feature |

|---|---|---|---|---|

| Baseline Wander (BW) | < 1 Hz | Up to 15% of EGM amplitude | Respiration, electrode-skin impedance changes | Slow, sinusoidal drift of signal isoelectric line. |

| Powerline Interference (PLI) | 50 Hz or 60 Hz (± harmonics) | 10 µV – 5 mV | Capacitive/inductive coupling from AC mains | Persistent sinusoidal oscillation superimposed on signal. |

| Motion Artifact (MA) | 0.1 Hz – 10 Hz | Can exceed EGM amplitude | Physical movement, electrode displacement | Abrupt, non-stationary, high-amplitude transients. |

Experimental Protocols for Noise Induction & Study

Protocol:In-VitroPLI and BW Characterization Setup

Objective: To systematically record and quantify PLI and BW in a controlled benchtop environment simulating clinical recording setups.

Materials: See Scientist's Toolkit (Section 6.0).

Methodology:

- Setup: Place a saline-filled tank (simulating torso conductivity) on a non-conductive surface. Submerge a commercial catheter electrode and a reference Ag/AgCl electrode.

- Signal Generation: Use a programmable signal generator to inject a synthetic cardiac EGM waveform (e.g., mimicking ventricular depolarization) through a pair of dedicated stimulating electrodes.

- PLI Induction: Position a standard AC power cable (120V/60Hz or 230V/50Hz) at varying distances (5-50 cm) from the recording electrodes and data acquisition (DAQ) system cables. Loop the cable to enhance electromagnetic coupling.

- BW Induction: Mechanically oscillate the recording electrode vertically (0.1-0.5 Hz) using a calibrated linear actuator to simulate respiratory-induced electrode motion relative to the medium.

- Data Acquisition: Acquire signals via a biopotential amplifier (gain: 1000, bandwidth: 0.1-500 Hz) and DAQ system (sampling rate: 2 kHz). Record three separate 5-minute epochs: (i) Clean EGM, (ii) EGM + PLI, (iii) EGM + BW.

- Analysis: Compute Power Spectral Density (PSD) to identify peak interference frequencies. Measure signal-to-noise ratio (SNR) as: SNR (dB) = 20 log₁₀(Psignal / Pnoise).

Protocol:In-VivoMotion Artifact Provocation

Objective: To elicit and characterize motion artifacts in an anesthetized preclinical model.

Methodology:

- Animal Preparation: Anesthetize and instrument a canine or swine subject per IACUC-approved protocols. Position a deflectable diagnostic catheter in the right ventricle under fluoroscopic guidance.

- Baseline Recording: Record stable bipolar EGM from the catheter tip for 5 minutes (reference period).

- Artifact Provocation: Implement a series of controlled maneuvers: a. Catheter Tap: Gently tap the catheter shaft proximal to the insertion site. b. Body Roll: Slowly tilt the surgical table approximately 15 degrees left and right. c. Respiration Increase: Adjust ventilator parameters to increase tidal volume by 30% for 60 seconds.

- Synchronized Recording: Synchronize EGM recording (high sampling rate: 4 kHz) with accelerometer data (placed on the animal's torso) and ventilator phase output.

- Analysis: Use accelerometer data to time-lock EGM transients. Characterize MA amplitude, duration, and spectral profile via short-time Fourier transform (STFT).

Visualizing the Noise Identification & Processing Workflow

Diagram Title: EGM Noise Source Identification and Mitigation Pathway for ML

Diagram Title: In-Vitro PLI & BW Characterization Protocol Flow

The Scientist's Toolkit: Key Research Reagents & Materials

Table 2: Essential Materials for EGM Noise Research

| Item | Function/Application |

|---|---|

| Programmable Signal Generator | Synthesizes pristine, known-parameter cardiac EGM templates for controlled noise addition studies. |

| Biopotential Amplifier (Isolated) | Amplifies microvolt-level EGM signals with high common-mode rejection ratio (CMRR >100 dB) to reject inherent interference. |

| High-Resolution DAQ System | Acquires signals at >= 2 kHz sampling rate to accurately resolve high-frequency noise components and EGM morphology. |

| Saline-Filled Tank/Phantom | Provides a volume conductor model for in-vitro experimentation, allowing reproducible electrode positioning and noise coupling. |

| Diagnostic Electrophysiology Catheter | Standardized tool for intracardiac signal recording; subject to motion and interference in clinical settings. |

| 3-Axis Accelerometer | Synchronously records mechanical motion to establish causality for motion artifact identification. |

| Digital Filtering Software (e.g., LabVIEW, Python SciPy) | Implements and tests noise removal algorithms (e.g., high-pass, notch, adaptive filters) prior to ML pipeline integration. |

Application Notes

Intracardiac electrograms (EGMs) provide critical, high-fidelity electrophysiological data essential for diagnosing arrhythmias, guiding ablation therapy, and assessing drug efficacy. The fundamental characteristics of these signals—including amplitude, frequency, morphology, and complexity—vary systematically based on both the type of arrhythmia (e.g., Atrial Fibrillation/AFib vs. Ventricular Tachycardia/VT) and the anatomical recording site (atrial vs. ventricular myocardium). For research aimed at developing machine learning (ML) features for automated diagnosis and mapping, understanding these variations is paramount. Atrial signals during AFib are characterized by low-voltage, high-frequency, and irregular activations, reflecting chaotic, multi-wavelet reentry. In contrast, ventricular EGMs during VT often show higher amplitude, more organized, and slower periodic signals, consistent with a macro-reentrant or focal mechanism. Site-specific differences are equally critical; atrial myocardium inherently generates faster, lower amplitude signals than ventricular tissue due to electrophysiological and structural properties. These distinctions form the basis for feature engineering in ML pipelines, where time-domain (e.g., voltage, slew rate), frequency-domain (e.g., dominant frequency, organization index), and complexity-based (e.g., entropy, fractal dimension) features must be tailored and validated for the specific clinical context.

Table 1: Characteristic EGM Parameters by Arrhythmia Type and Recording Site

| Parameter | Sinus Rhythm (Atrium) | AFib (Atrium) | Sinus Rhythm (Ventricle) | VT (Ventricle) |

|---|---|---|---|---|

| Voltage Amplitude (mV) | 1.5 - 4.0 | 0.1 - 0.5 | 5.0 - 10.0 | 1.0 - 5.0 |

| Dominant Frequency (Hz) | 5 - 7 | 6 - 12 | 3 - 5 | 3 - 7 |

| Cycle Length (ms) | 600 - 1000 | 100 - 200 | 600 - 1000 | 200 - 400 |

| Slew Rate (V/s) | 0.5 - 1.5 | 0.05 - 0.2 | 1.0 - 3.0 | 0.2 - 1.0 |

| Organization Index | High (0.8-1.0) | Low (0.1-0.3) | High (0.8-1.0) | Medium-High (0.5-0.8) |

| Sample Entropy | Low (<0.5) | High (>1.5) | Low (<0.5) | Medium (0.8-1.2) |

Note: Values are generalized from contemporary literature and may vary based on specific patient pathology, recording electrode type (bipolar/unipolar), and inter-electrode spacing.

Experimental Protocols

Protocol 1: Acquisition of Clinical EGMs for Feature Database Construction

Objective: To collect a standardized dataset of intracardiac EGMs during different arrhythmias from specified sites for ML feature research. Materials: See "Scientist's Toolkit" below. Methodology:

- Patient Preparation & Consent: Obtain IRB approval and informed consent. Perform standard pre-procedure preparations.

- Electrode Catheter Placement: Under fluoroscopic/3D mapping guidance, position diagnostic catheters:

- A decapolar catheter in the coronary sinus (CS) for left atrial/CS recordings.

- A duodecapolar catheter along the right atrial free wall and crista terminalis.

- A quadripolar catheter at the right ventricular apex.

- Signal Acquisition & Arrhythmia Induction:

- Record 60 seconds of baseline sinus rhythm from all catheters.

- For AFib: If the patient is in sinus rhythm, induce AFib via rapid atrial pacing or isoproterenol infusion.

- For VT: Perform programmed electrical stimulation (PES) from the RV apex with up to 3 extra stimuli to induce VT.

- Data Recording: Using the electrophysiology lab system, record unipolar and bipolar EGMs from all catheter electrodes simultaneously with surface ECG leads. Settings: Sampling rate ≥ 1000 Hz, bandpass filter 0.05-500 Hz for unipolar, 30-500 Hz for bipolar.

- Annotation: An expert electrophysiologist will annotate the onset/offset of each arrhythmia episode and label recording sites.

- Export: Export data segments in a standard format (e.g., .mat, .txt) with full metadata.

Protocol 2: In-Silico Simulation of Arrhythmia EGMs

Objective: To generate synthetic EGM data with known ground truth for validating feature robustness. Methodology:

- Model Selection: Use a detailed cardiac tissue model (e.g., Courtemanche-Ramirez-Nattel for atrium, ten Tusscher-Panfilov for ventricle) integrated into a monodomain or bidomain framework.

- Arrhythmia Simulation:

- AFib: Initiate in a 2D or 3D atrial tissue sheet by applying S1-S2 cross-field stimulation or seeding multiple random reentrant wavelets.

- VT: Initiate in a ventricular tissue slab using a rapid pacing protocol or by creating a zone of slowed conduction to establish a reentrant circuit.

- Virtual Electrogram Calculation: Simulate bipolar EGMs by calculating the extracellular potential difference between two points in the model, incorporating electrode size and spacing.

- Parameter Variation: Systematically vary parameters (e.g., fibrosis density, ion channel conductances) to simulate different pathological substrates.

- Noise Addition: Add realistic noise (50/60 Hz interference, baseline wander, myopotential) to the clean simulated signals.

Protocol 3: Feature Extraction and Comparative Analysis Workflow

Objective: To extract, compare, and validate ML-relevant features from EGMs grouped by arrhythmia type and site. Methodology:

- Preprocessing: Apply a notch filter (50/60 Hz). For bipolar signals, apply a high-pass filter (30 Hz). Normalize amplitudes.

- Segmentation: Segment continuous recordings into 5-second non-overlapping epochs labeled by rhythm and site.

- Feature Extraction: For each epoch, calculate a comprehensive feature set:

- Time-Domain: Peak-to-peak voltage, maximal slew rate, local activation time (LAT) variability.

- Frequency-Domain: Dominant frequency (DF), DF organization index (ratio of DF power to total power).

- Complexity: Sample entropy, multiscale entropy, wavelet entropy, fractal dimension.

- Statistical Comparison: Use non-parametric tests (Kruskal-Wallis with post-hoc Dunn's) to compare each feature across the four groups: Atrial-AFib, Atrial-Sinus, Ventricular-VT, Ventricular-Sinus.

- Feature Selection: Apply dimensionality reduction (e.g., PCA) or feature importance ranking (e.g., random forest) to identify the most discriminative features for classifying arrhythmia and site.

Visualizations

Title: EGM Feature Extraction & Analysis Workflow

Title: Factors Determining EGM Characteristics

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for EGM Research

| Item | Function in Research |

|---|---|

| Clinical-Grade Electrophysiology Catheter (e.g., Duodecapolar, PentaRay) | High-density, multi-electrode mapping catheters for acquiring spatially detailed bipolar/unipolar EGMs from specific cardiac chambers. |

| 3D Electroanatomic Mapping System (e.g., CARTO, EnSite) | Provides precise 3D spatial localization of each EGM recording site, enabling correlation of signal features with anatomy. |

| Biophysical Simulation Software (e.g., OpenCARP, COMSOL) | Platforms for running in-silico cardiac tissue models to generate synthetic EGM data with controllable parameters. |

| Signal Processing Toolkit (e.g., MATLAB Wavelet Toolbox, Biosig for Python) | Software libraries containing validated algorithms for filtering, segmenting, and extracting time/frequency/complexity features from EGM signals. |

| Isolated Animal Heart Perfusion System (Langendorff) | Ex-vivo model for recording high-fidelity EGMs from atrial and ventricular tissue during pharmacologically induced arrhythmias. |

| Programmable Electrical Stimulator | Essential for arrhythmia induction protocols in both clinical studies and experimental models. |

| Data Annotation Software (e.g., LabChart, Custom GUI) | Allows expert manual review and labeling of EGM recordings, creating the ground-truth dataset for supervised ML. |

Within electrophysiology research for drug development, intracardiac electrograms (EGMs) are the primary data source for investigating arrhythmia mechanisms and compound effects. Extracting ML-ready features from these signals is a central thesis of modern computational cardiology. This application note establishes that rigorous, high-fidelity preprocessing is the foundational, non-negotiable step determining the validity of all downstream feature engineering and model outcomes. Without it, extracted features represent artifact, not biology.

The High-Fidelity EGM Processing Pipeline: A Protocol

The following protocol details the mandatory steps to transform raw EGM recordings into a curated dataset for feature extraction.

Protocol 1.1: From Raw Acquisition to Cleaned Time-Series Objective: To remove non-cardiac noise and preserve morphologically significant components of the EGM. Materials: Multichannel electrophysiology recording system, isolated animal or human heart preparation, bipolar or unipolar electrodes, data acquisition unit (≥ 1 kHz sampling rate), computational environment (e.g., Python with SciPy/NumPy, MATLAB). Procedure:

- Signal Acquisition: Record EGMs at a minimum sampling frequency of 1 kHz. For ventricular signals or complex fractionated electrograms, 2 kHz or higher is recommended. Ensure proper grounding to minimize 50/60 Hz line interference.

- Digital Filtering: a. High-Pass Filter: Apply a zero-phase Butterworth high-pass filter (order 2-4) with a cutoff at 0.5 Hz to remove baseline wander and very low-frequency drift. b. Low-Pass Filter: Apply a zero-phase Butterworth low-pass filter (order 4-6) with a cutoff at 250 Hz to suppress high-frequency thermal noise and prevent aliasing for subsequent downsampling. c. Notch Filter: If significant line interference persists, apply a narrow band-stop (notch) filter at 50/60 Hz and its first harmonic (100/120 Hz).

- Powerline & Artifact Rejection: Employ adaptive subtraction techniques (e.g., template matching) for large pacing artifacts or mechanical motion artifacts that filters cannot remove without signal distortion.

- Quality Control & Segmentation: Visually inspect cleaned signals. Segment data into individual beats or episodes based on stimulus markers or detected activation times.

Quantitative Impact of Processing on Feature Stability

The table below summarizes experimental data demonstrating how preprocessing fidelity directly affects the coefficient of variation (CV) for common EGM features, a critical metric for ML dataset robustness.

Table 1: Feature Stability as a Function of Preprocessing Rigor

| EGM Feature | Raw Signal CV (%) | With Basic Filtering CV (%) | With High-Fidelity Processing CV (%) | Notes |

|---|---|---|---|---|

| Peak-to-Peak Amplitude (mV) | 35.2 | 18.7 | 8.1 | Highly susceptible to baseline wander. |

| Local Activation Time (ms) | 22.5 | 10.3 | 3.8 | Jitter reduced by precise high-pass filtering. |

| Complex Fractionated Interval (ms) | 45.8 | 30.1 | 15.4 | Uncontrolled noise falsely extends intervals. |

| Spectral Dominant Frequency (Hz) | 40.1 | 25.6 | 12.9 | Line noise creates spurious spectral peaks. |

| Organizational Index (Unitless) | 50.3 | 32.5 | 18.2 | Noise degrades correlation-based metrics severely. |

Experimental Protocol for Validation

Protocol 2.1: Validating Preprocessing Efficacy for ML Objective: To empirically test the hypothesis that classifier performance is dependent on preprocessing quality. Experimental Design:

- Dataset Creation: From a repository of porcine infarct-model EGMs (n=500 recordings), create three datasets:

- Dataset A (Raw): Unprocessed signals.

- Dataset B (Basic): Signals with only 30-250 Hz bandpass filtering.

- Dataset C (High-Fidelity): Signals processed per Protocol 1.1, including adaptive artifact removal.

- Feature Extraction: From each dataset, extract a standardized panel of 20 temporal and spectral features (e.g., from Table 1).

- Model Training & Evaluation: Train a random forest classifier to identify "infarct zone" vs. "healthy zone" EGMs using a 70/30 train-test split. Perform 5-fold cross-validation.

- Metrics: Compare mean accuracy, F1-score, and feature importance rankings across Datasets A, B, and C.

Expected Outcome: Dataset C will yield significantly higher accuracy and F1-score, with feature importance weights that align with known electrophysiological biomarkers, unlike Datasets A and B where importance is skewed by noise-corrupted features.

Visualizing the Critical Workflow & Signal Degradation Pathway

Title: The Critical Data Pathway: High-Fidelity Processing Determines ML Success

Title: Sources of Noise Corrupting the True EGM Signal

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item/Category | Function in EGM Processing & ML Feature Research |

|---|---|

| High-Impedance, Bipolar Electrodes | Minimizes far-field signal pickup, providing a localized EGM critical for detecting discrete pathological signals. |

| Optical Mapping-Compatible Dye (e.g., Di-4-ANEPPS) | Provides gold-standard validation for activation/recovery times derived from electrical EGMs, grounding ML features in biology. |

| Selective Ion Channel Blockers (e.g., E-4031, Dofetilide) | Used to create controlled pharmacological models of Long QT or specific arrhythmias, generating well-labeled EGM data for supervised ML. |

| Programmable Electrical Stimulator | Enforces consistent pacing protocols (S1-S2, burst pacing) to provoke and record repetitive or arrhythmic events for feature analysis. |

| Langendorff Perfusion System (ex-vivo) | Maintains stable, isolated heart preparations for long-duration, low-noise EGM recordings required for training deep learning models. |

| Digital Real-Time Recording Software (e.g., LabChart, EP-Workmate) | Acquires synchronous, high-sample-rate data from multiple electrodes, ensuring temporal alignment of all channels for spatial feature extraction. |

| Signal Processing Suite (e.g., MATLAB Signal Toolbox, Python BioSPPy) | Implements standardized, reproducible digital filters and feature extraction algorithms essential for creating consistent ML inputs. |

Building the Pipeline: Step-by-Step EGM Preprocessing and Feature Engineering for ML Models

Within the broader thesis on Electrogram (EGM) signal processing for machine learning feature research, raw intracardiac signals contain both physiological information and pervasive noise. Effective preprocessing is critical for extracting robust, noise-resistant features for downstream ML models in drug development and electrophysiology research. This protocol details three core digital filtering strategies.

Quantitative Filter Comparison

Table 1: Standard Filter Specifications for Intracardiac EGMs

| Filter Type | Typical Passband/Cutoff Frequencies | Attenuation (Stopband) | Common Filter Order | Primary Application in EGM Processing |

|---|---|---|---|---|

| Band-pass (Butterworth) | 1-300 Hz or 30-300 Hz | ≥ 20 dB at 0.5 Hz & 350 Hz | 4th - 6th | Remove baseline wander & high-frequency EMI. Preserve ventricular/atrial components. |

| Notch (IIR) | 50 Hz or 60 Hz ± 2 Hz | ≥ 40 dB at exact line frequency | 2nd (Q=30-60) | Eliminate powerline interference (50/60 Hz). |

| Adaptive (LMS/NLMS) | Variable, based on reference noise | Dependent on convergence factor μ | N/A (Filter length: 32-64 taps) | Remove in-band noise (e.g., muscle artifact, breathing) where static filters fail. |

| Band-pass (Chebyshev I) | 1-300 Hz | ≥ 50 dB at 0.1 Hz & 500 Hz | 5th - 8th | Steeper roll-off for high-noise environments. Accepts passband ripple. |

| Savitzky-Golay (Smoothing) | N/A (Polynomial fitting) | N/A | Window: 5-21 pts, Poly: 3-5 | Preserve peak morphology while smoothing high-frequency noise. |

Table 2: Performance Metrics on Simulated EGM Data (Signal-to-Noise Ratio Improvement)

| Filter Type | Input SNR (dB) | Output SNR (dB) | Artifact Introduced | Computational Load (Relative) |

|---|---|---|---|---|

| Butterworth Band-pass | 10 | 18 | Low (phase distortion minimal with forward-backward) | Low |

| IIR Notch (60 Hz) | 10 (with line noise) | 22 | Moderate (risk of signal ringing) | Very Low |

| Adaptive LMS | 5 (non-stationary noise) | 15 | Low (if reference appropriate) | High |

| No Filtering | 10 | 10 | None | None |

Experimental Protocols

Protocol 3.1: Band-pass Filtering for Baseline EGM Cleanup

Objective: Remove out-of-band noise to isolate the cardiac signal of interest (typically 1-300 Hz).

Materials: Raw unipolar or bipolar EGM time-series data (sampled at ≥ 1 kHz). Software: MATLAB (Signal Processing Toolbox), Python (SciPy), or LabVIEW.

Method:

- Specification: Define passband

f_low = 1 Hz,f_high = 300 Hz. For atrial signals, considerf_low = 30 Hz. - Design: Use a 5th-order zero-phase Butterworth filter (to prevent phase distortion).

- In MATLAB:

[b,a] = butter(5, [f_low f_high]/(fs/2), 'bandpass'); - In Python:

from scipy.signal import butter, filtfilt; b, a = butter(5, [f_low, f_high], btype='band', fs=fs)

- In MATLAB:

- Application: Apply using forward-backward filtering (

filtfilt). - Validation: Plot Power Spectral Density (PSD) pre- and post-filtering. Confirm attenuation outside passband.

Protocol 3.2: Notch Filtering for Powerline Interference

Objective: Attenuate 50/60 Hz line noise and its harmonics without distorting EGM morphology.

Method:

- Detection: Perform FFT on a representative signal segment to confirm exact noise frequency (often 60.0 Hz ± 0.1 Hz).

- Design: Use a 2nd-order IIR notch filter with a quality factor (Q) of 35.

- In MATLAB:

wo = 60/(fs/2); bw = wo/35; [b,a] = iirnotch(wo, bw);

- In MATLAB:

- Application: Apply using

filtfilt. - Validation: Inspect time-domain signal for removal of 60 Hz oscillation and check PSD for a clear notch.

Protocol 3.3: Adaptive Noise Cancellation for In-Band Artifacts

Objective: Remove noise (e.g., electromyographic) with frequency overlap with the cardiac signal.

Method:

- Reference Signal: Obtain a noise reference, either from a separate accelerometer/EMG channel or derived from the primary signal (e.g., using a separate high-pass filtered version >100 Hz).

- Algorithm Setup: Implement Normalized Least Mean Squares (NLMS) adaptive filter.

- Filter length (L): 32 taps.

- Step size (μ): 0.01 (normalized).

- Iteration: Allow the filter weights to converge over a training segment (≥ 500 ms).

- Output: The filter output is the "clean" EGM. The error signal is the noise estimate.

- Validation: Compare the autocorrelation of the output signal with the raw; should show cleaner periodic peaks.

Visualization of Workflows

Title: Sequential EGM Preprocessing Filtering Workflow

Title: Adaptive Noise Cancellation System Block Diagram

The Scientist's Toolkit

Table 3: Essential Research Reagents & Solutions for EGM Filtering Experiments

| Item Name | Function/Application in Protocol | Example Product/Specification |

|---|---|---|

| Programmable Electrophysiology Amplifier/DAQ | Acquire raw, high-fidelity intracardiac signals with adjustable gain. Essential for all protocols. | Intan RHD Series, ADInstruments PowerLab, Blackrock Microsystems CerePlex. |

| Ag/AgCl Electrodes (Epicardial or Intracardiac) | Provide stable, low-noise electrical interface for EGM recording. | Plastics One EEG/ECG electrodes, bipolar/multipolar EP catheters. |

| Physiological Saline (0.9% NaCl) or Krebs-Henseleit Solution | Maintain tissue viability during ex-vivo or animal model EGM recordings. | Sigma-Aldrich, prepared with 5.6 mM Glucose, gassed with 95% O2/5% CO2. |

| Signal Processing Software License | Implement and validate filtering algorithms. | MATLAB + Signal Processing Toolbox, Python (SciPy, NumPy, MNE-Python). |

| Synthetic EGM & Noise Dataset | Benchmark filter performance with known ground truth. | MIT-BIH Arrhythmia Database, simulated noisy EGMs (e.g., with added 50/60 Hz sinusoid, EMG noise). |

| Line Noise Simulator/Injector | Calibrate notch filters by introducing known interference. | Function generator (e.g., Rigol DG1022Z) coupled via a non-invasive transformer. |

| Computational Environment | Run adaptive filters in real-time or offline. Requires predictable timing. | Desktop with multicore CPU (Intel i7/equivalent), ≥16 GB RAM, Real-time OS extension (e.g., Ubuntu with PREEMPT_RT). |

Within the broader thesis on electrogram (EGM) signal processing for machine learning (ML) feature extraction, the reproducibility and biological relevance of derived features depend critically on a standardized preprocessing workflow. Following initial denoising and filtering, Workflow 2 addresses the challenges of signal heterogeneity by implementing structured segmentation, temporal alignment, and amplitude normalization. This protocol details the application notes for these techniques to ensure consistent analysis across multi-electrode arrays, subjects, and experimental conditions for downstream ML model training in cardiac electrophysiology and drug development research.

Core Techniques: Application Notes

Segmentation

Segmentation isolates discrete physiological events from continuous EGM recordings. For ML, consistent event windows are essential for feature comparison.

Protocol: R-Peak and Activation Window Segmentation

- Input: Filtered bipolar or unipolar EGM signals.

- R-Peak Detection: Apply the Pan-Tompkins algorithm or a similar QRS detector to a surface ECG channel or a representative EGM channel.

- Algorithm parameters (e.g., refractory period, threshold) must be fixed for an entire dataset.

- Activation Time (AT) Detection: For intracardiac EGMs, identify local activation within a search window (e.g., -30 ms to +50 ms) around the R-peak.

- Method: Use maximum -dV/dt for unipolar signals or maximum absolute amplitude for bipolar signals.

- Segment Extraction: Extract a window of fixed duration around each fiducial point (R-peak or AT).

- Example Window: -50 ms to +150 ms relative to fiducial point.

- Segments containing noise or ectopic beats (detected via aberrant RR intervals) should be tagged and optionally excluded.

Table 1: Segmentation Algorithm Performance Metrics

| Algorithm | Target | Sensitivity (%) | Positive Predictivity (%) | Computational Cost (ms/beat) |

|---|---|---|---|---|

| Pan-Tompkins | R-Peak | 99.3 | 99.7 | ~1.2 |

| Wavelet-Based | R-Peak | 99.5 | 99.6 | ~4.8 |

| Maximum -dV/dt | Unipolar AT | N/A | N/A | ~0.5 |

| Peak Bipolar | Bipolar AT | N/A | N/A | ~0.3 |

Alignment

Temporal alignment corrects for small temporal jitter between recorded activations of the same event, ensuring features are compared at equivalent physiological phases.

Protocol: Dynamic Time Warping (DTW) for EGM Alignment

- Input: Segmented EGM beats for a single channel across multiple cycles.

- Template Selection: Select the median beat or a visually representative, noise-free beat as the template.

- Warping Path Calculation:

- Compute a cost matrix between the template and a target beat.

- Find the optimal warping path that minimizes the cumulative distance, subject to step pattern constraints (e.g., Sakoe-Chiba band).

- Application: Apply the derived warping path to the target beat to align its time axis to the template.

- Iteration: Repeat for all beats and all channels. Alignment should be performed channel-wise.

Normalization

Normalization scales signal amplitudes to a common range, reducing inter-subject and inter-recording variability not attributable to the experimental condition.

Protocol: Baseline-Corrected Peak-to-Peak Normalization

- Input: Aligned EGM segments.

- Baseline Correction: For each segment, calculate the mean amplitude of a pre-activation baseline period (e.g., -50 ms to -10 ms prior to AT). Subtract this value from the entire segment.

- Scale Calculation: Identify the absolute peak-to-peak amplitude of the baseline-corrected segment.

- Normalization: Divide the entire baseline-corrected segment by the peak-to-peak amplitude. Resulting values typically range from -1 to 1.

- Alternative: Z-score normalization using the mean and standard deviation of the segment's baseline period may be used for certain spectral features.

Table 2: Impact of Normalization on Feature Variance

| Feature | Raw Signal (Mean ± SD) | Post-Normalization (Mean ± SD) | % Reduction in SD |

|---|---|---|---|

| Peak Amplitude (mV) | 2.5 ± 1.8 | 1.0 ± 0.1 | 94.4% |

| Integral (mV·ms) | 45.3 ± 32.1 | 18.2 ± 2.3 | 92.8% |

| Duration at 50% (ms) | 12.4 ± 3.1 | 12.4 ± 3.1 | 0% |

Integrated Preprocessing Workflow Diagram

Title: EGM Preprocessing Workflow 2: Segmentation, Alignment, Normalization

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for EGM Preprocessing & Analysis

| Item | Function in Workflow |

|---|---|

| High-Density Mapping System (e.g., Prucka Cardiolab, EP-Workmate) | Acquires raw, multichannel EGM and surface ECG signals with precise temporal synchronization. |

| Signal Processing Suite (MATLAB with Signal Processing Toolbox, Python SciPy/NumPy) | Provides algorithmic foundation for implementing custom segmentation, DTW, and normalization code. |

| Open-Source ECG Toolbox (e.g., WFDB Toolbox, BioSPPy) | Offers tested implementations of standard detectors (Pan-Tompkins) for validation and benchmarking. |

| Annotation Software (e.g., LabChart, Custom GUI) | Enables manual verification and correction of automated fiducial point (AT) detection. |

| Computational Environment (Jupyter Notebook, MATLAB Live Script) | Allows for interactive, step-by-step development and documentation of the preprocessing pipeline. |

Experimental Validation Protocol

Title: Protocol for Validating Preprocessing Workflow Efficacy on Simulated and Clinical EGM Data

Objective: To quantify the reduction in signal variance and improvement in ML feature discriminability achieved by Workflow 2.

Materials:

- Dataset A: Simulated EGM signals with known temporal jitter and amplitude variation.

- Dataset B: Clinical high-density EGMs from 10 patients (atrial fibrillation ablation procedure).

- Software: Custom Python/Matlab scripts implementing Workflow 2.

Methods:

- Apply Workflow: Process both datasets through the sequential steps: Segmentation -> Alignment -> Normalization.

- Quantify Variance: For Dataset A, measure the standard deviation of activation timing and peak amplitude before and after alignment/normalization.

- Feature Extraction: From Dataset B, extract 5 common ML features (e.g., RMS voltage, dominant frequency, complexity index) from both raw and preprocessed signals.

- Assess Discriminability: Using labeled regions (sinus rhythm vs. arrhythmia), calculate the Fisher Score or t-statistic for each feature pre- and post-processing to measure between-class separation.

- Statistical Analysis: Perform paired t-tests on the variance metrics and discriminability indices.

Expected Outcome: A significant reduction in within-class variance and a significant increase in feature discriminability scores post-preprocessing, confirming the workflow's utility for robust ML feature preparation.

Within a broader thesis on electrogram (EGM) signal processing for deriving machine learning-ready features, this protocol addresses two critical preprocessing challenges: the removal of non-physiological artifacts (e.g., motion, pacing) and the suppression of far-field ventricular (FFV) signals from atrial EGMs. Clean atrial substrate characterization is paramount for applications in atrial fibrillation research, drug efficacy studies, and ablation target identification.

Core Signal Processing Algorithms & Quantitative Comparisons

Artifact Removal Methods

Artifacts are typically transient, high-amplitude, broad-spectrum disturbances.

Table 1: Comparative Performance of Artifact Removal Techniques

| Method | Core Principle | Optimal Use Case | Atrial Signal Preservation (Reported SNR Improvement) | Computational Load |

|---|---|---|---|---|

| Template Subtraction | Average artifact waveform is subtracted from detected events. | Regular pacing artifacts, catheter knock. | High (8-12 dB) | Low |

| Wavelet Denoising | Thresholding of wavelet coefficients in artifact-dominated scales. | Non-stationary, sharp artifacts. | Moderate (6-10 dB) | Medium |

| Adaptive Filtering (RLS/NLMS) | Uses a reference channel (e.g., pacing signal) to predict & cancel artifact. | Reference-correlated artifacts. | High (10-15 dB) | High |

| Blank-and-Interpolate | Simple replacement of artifact-contaminated segments. | Simple, large-amplitude spikes. | Low (Potential signal loss) | Very Low |

Far-Field Ventricular (FFV) Signal Cancellation

FFV signals represent ventricular depolarization (QRS) obscuring atrial electrograms.

Table 2: FFV Removal Algorithm Comparison

| Algorithm | Key Inputs | Advantages | Limitations (Reported Residual FFV) |

|---|---|---|---|

| Independent Component Analysis (ICA) | Multi-channel EGMs (≥3). | Blind separation, no timing reference needed. | Channel count requirement, ordering ambiguity (≈15% residual). |

| Spatial Cancellation (e.g., V-subtraction) | A unipolar EGM and a coincident ventricular reference. | Intuitive, computationally simple. | Requires precise temporal alignment (<5% residual). |

| Adaptive Template Subtraction | Atrial EGM and QRS template from ventricular channel. | Effective for consistent FFV morphology. | Fails with variable conduction (≈10% residual). |

| Common Average Referencing | All electrodes on an array. | Reduces common-mode signals (FFV). | Also attenuates common-mode atrial signals. |

Experimental Protocols

Protocol for Validation of Artifact Removal

Title: In-silico & In-vitro Validation of Artifact Filters

Materials:

- Source Data: High-resolution atrial EGMs (e.g., from CARTO or Ensite systems) during sinus rhythm and pacing.

- Artifact Simulation: Clean EGMs are synthetically contaminated with modeled pacing artifacts (monophasic/biphasic pulses) or motion artifact templates.

- Ground Truth: The original, clean EGM segment.

Method:

- Data Segmentation: Isolate episodes with and without artifacts. Annotate artifact onset/offset.

- Algorithm Application: Apply each method from Table 1 to the contaminated signal.

- Performance Quantification:

- Calculate Signal-to-Noise Ratio (SNR) before and after processing:

SNR = 20*log10(RMS(signal) / RMS(noise)). - Compute Root Mean Square Error (RMSE) between processed signal and the ground truth clean EGM.

- Visually inspect for atrial signal distortion (e.g., alteration of fractionated electrogram morphology).

- Calculate Signal-to-Noise Ratio (SNR) before and after processing:

Protocol for FFV Removal Efficacy Assessment

Title: Quantifying Atrial Substrate Revelation Post-FFV Cancellation

Materials:

- Recordings: Simultaneous unipolar/bipolar atrial EGMs and a clear ventricular reference (e.g., surface ECG lead II or intracardiac RV electrogram).

- Annotation: Precise fiducial markers for atrial (P-wave) and ventricular (R-wave) activations.

Method:

- Alignment: Temporally align ventricular reference to atrial channels using cross-correlation.

- FFV Cancellation: Apply chosen FFV removal algorithm (e.g., Spatial Cancellation): a. For each ventricular event, segment the corresponding FFV in the atrial EGM. b. Scale and subtract the ventricular reference template from the atrial channel. c. Interpolate the subtracted segment to maintain continuity.

- Analysis:

- Amplitude Analysis: Measure peak-to-peak atrial EGM amplitude in the P-wave region before and after FFV removal.

- Spectral Analysis: Compute power spectral density (0-100 Hz) to observe reduction in ventricular-dominated frequencies (~5-20 Hz).

- Feature Stability: Calculate stability of machine learning features (e.g., Shannon entropy, dominant frequency) across consecutive cycles post-processing.

Visualization of Workflows

Title: Atrial EGM Preprocessing: Artifact & FFV Removal Pipeline

Title: Decision Workflow for Far-Field Ventricular Cancellation

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for EGM Preprocessing Studies

| Item / Solution | Function in Protocol | Example/Notes |

|---|---|---|

| High-Resolution Electrophysiology System | Acquisition of raw, multi-channel intracardiac EGMs. | Biosemi, EP-Workmate, CARTO 3. Provides digital data export (e.g., .txt, .mat). |

| Signal Processing Software Library | Implementation of algorithms (filtering, ICA, wavelet). | MATLAB with Signal Processing Toolbox, Python (SciPy, PyWavelets, MNE). |

| Synthetic EGM Generator | Creates ground truth data with controlled artifacts/FFV. | In-house or commercial simulators (e.g., MIT-BIH Arrhythmia Generator). |

| Pre-annotated Public EGM Database | For benchmarking and validation. | PhysioNet Computing in Cardiology Challenges data (e.g., 2020/2021 AF events). |

| Precision Timing Alignment Tool | Micro-adjustment of ventricular reference latency. | Cross-correlation peak detection algorithms with sub-sample interpolation. |

| Feature Extraction Suite | Quantifies outcome of preprocessing for ML. | Custom scripts for calculating complex fractionated atrial electrogram (CFAE) indices, organizational metrics. |

This document details application notes and protocols for extracting time-domain and amplitude features from Electrogram (EGM) signals. This work is a foundational component of a broader thesis on EGM signal processing for machine learning-based cardiac electrophysiology research. The primary goal is to generate robust, quantifiable features that can discriminate between healthy and pathological tissue substrates, thereby enabling applications in drug efficacy testing, ablation target identification, and arrhythmia mechanism characterization.

Core Feature Definitions & Quantitative Summaries

Voltage-Based Features

Voltage features quantify the amplitude characteristics of the EGM, reflecting tissue viability and depolarization strength.

Table 1: Core Voltage-Domain Features

| Feature Name | Mathematical Definition | Physiological Correlation | Typical Normal Range (Bipolar, Peak-to-Peak) | Pathological Threshold | ||

|---|---|---|---|---|---|---|

| Peak-to-Peak Voltage (Vpp) | ( V_{pp} = \max(S(t)) - \min(S(t)) ) | Tissue viability, mass of activating myocytes. | 1.5 - 5.0 mV | < 0.5 mV (scar) | ||

| Root Mean Square Voltage (VRMS) | ( V{RMS} = \sqrt{\frac{1}{N} \sum{i=1}^{N} S_i^2} ) | Overall signal energy. | 0.2 - 1.2 mV | < 0.1 - 0.15 mV | ||

| Peak Negative Voltage (Vmin) | ( V_{min} = \min(S(t)) ) | Local activation amplitude. | -0.5 to -2.5 mV | > -0.5 mV | ||

| Average Absolute Voltage (Vabs) | ( V{abs} = \frac{1}{N} \sum{i=1}^{N} | S_i | ) | Mean rectified amplitude. | 0.1 - 0.8 mV | Context-dependent |

Complexity & Fractionation Indices

These features describe the morphology and temporal fragmentation of the EGM, indicative of discontinuous, anisotropic conduction.

Table 2: Complexity & Fractionation Features

| Feature Name | Calculation Protocol | Interpretation | Normal Value | High Fractionation Value |

|---|---|---|---|---|

| Number of Peaks (NP) | Count of local extrema exceeding noise threshold (±0.05 mV). | Direct measure of temporal fragmentation. | 1-3 | ≥ 4 |

| Short-Term Fractionation (STF) | ( \frac{\text{NP}}{\text{EGM Duration (ms)}} ) | Peaks per unit time. | < 0.1 peaks/ms | > 0.15 peaks/ms |

| Complex Fractionated Electrogram (CFE) Mean | Average interval between consecutive detected peaks. | Inverse of peak frequency. | > 120 ms | < 70 ms |

| CFE Standard Deviation | Std. dev. of inter-peak intervals. | Regularity of fractionation. | Low | High (irregular) |

| Shannon Entropy (SE) | ( SE = -\sum pi \log2(p_i) ) for binned signal amplitudes. | Signal unpredictability & disorder. | Low (< 2.5) | High (≥ 3.0) |

Experimental Protocols for Feature Extraction

Protocol: Acquisition & Preprocessing for Feature Engineering

Objective: Obtain clean, physiological EGM signals suitable for time-amplitude analysis. Materials: See "The Scientist's Toolkit" below. Procedure:

- Signal Acquisition: Acquire bipolar EGMs from mapping system (e.g., CARTO, EnSite). Ensure contact force is stable (>5g). Sampling rate ≥ 1 kHz (recommended 2 kHz).

- Bandpass Filtering: Apply a 4th-order Butterworth bandpass filter (30-500 Hz) to remove far-field activity and high-frequency noise.

- Notch Filtering (Optional): Apply a 50/60 Hz notch filter if line noise is present.

- Baseline Wander Removal: Apply a high-pass filter at 1 Hz or use polynomial/spline fitting and subtraction.

- Signal Trimming: Isolate a 2-second window or specific number of beats. For beat-specific features, window around a fiducial point (e.g., V-peak in unipolar).

- No Floor Estimation: Calculate noise floor from isoelectric segment. Define amplitude threshold as 3× RMS noise.

- Output: Preprocessed EGM snippet ready for feature computation.

Protocol: Automated Computation of Fractionation Indices

Objective: Calculate NP, CFE Mean, and CFE Standard Deviation reproducibly. Input: Preprocessed EGM signal (S). Algorithm:

- Peak Detection: a. Identify all local maxima and minima in S. b. Apply amplitude threshold: Discard extrema where |amplitude| < (0.05 mV OR 3× noise floor). c. Apply temporal threshold: Merge extrema occurring within a refractory period (e.g., 15 ms).

- Peak Validation: Count the final set of validated peaks (NP).

- Inter-Peak Interval (IPI) Calculation: Compute the time difference between consecutive peaks (maxima or minima).

- CFE Metrics: a. CFE Mean: ( \text{CFE}{\text{mean}} = \frac{1}{M} \sum{j=1}^{M} IPIj ), where M is the number of intervals. b. CFE Standard Deviation: ( \text{CFE}{\text{SD}} = \sqrt{\frac{1}{M} \sum{j=1}^{M} (IPIj - \text{CFE}_{\text{mean}})^2 } ).

- Output: NP, CFE Mean (ms), CFE SD (ms).

Visualizations

Title: EGM Feature Extraction Workflow

Title: Feature Engineering in Broader Thesis Context

The Scientist's Toolkit

Table 3: Essential Research Reagents & Materials

| Item | Function in EGM Feature Research | Example/Specification |

|---|---|---|

| Clinical Electrophysiology System | Acquires raw, high-fidelity intracardiac EGMs. | CARTO 3 (Biosense Webster), EnSite Precision (Abbott). |

| High-Resolution Mapping Catheter | Provides the bipolar electrode pairs for EGM recording. | PentaRay (Biosense Webster), Advisor HD Grid (Abbott). |

| Signal Processing Software (Library) | Implements filtering, peak detection, and feature algorithms. | MATLAB Signal Processing Toolbox, Python (SciPy, NumPy). |

| Digital Filter Set | Removes noise and artifacts to isolate local EGM components. | Butterworth Bandpass (30-500 Hz), Notch (50/60 Hz). |

| Peak Detection Algorithm | Identifies local deflections for complexity analysis. | Custom script with amplitude/refractory thresholds. |

| Validation Phantom/Simulator | Bench-testing of feature accuracy using known signals. | ECG/EGM signal simulator with programmable complexity. |

| Database Management System | Stores raw signals, computed features, and patient metadata. | SQL database, MATLAB .mat structures, HDF5 files. |

Within the broader thesis on Electrogram (EGM) signal processing for machine learning feature research, the extraction of robust, physiologically relevant features is paramount. While time-domain features capture amplitude and timing, they are insufficient for characterizing the complex, non-stationary nature of cardiac arrhythmias. Spectral and time-frequency features, derived from transformations like the Discrete Fourier Transform (DFT) and Wavelet Transforms, provide a critical lens into the frequency content and its temporal evolution. These features are hypothesized to be potent discriminators for substrate characterization, therapy efficacy assessment in drug development, and arrhythmia risk stratification in preclinical and clinical research.

Core Spectral & Time-Frequency Feature Definitions

Discrete Fourier Transform (DFT) & Derived Features

The DFT decomposes a finite-length EGM signal segment into its constituent sinusoidal frequency components. For a discrete signal x[n] of length N, the DFT X[k] is: X[k] = Σ_{n=0}^{N-1} x[n] * e^{-j(2π/N)kn}, for k = 0, 1, ..., N-1. From the power spectral density (PSD, S[k] = |X[k]|²), key features are extracted.

Table 1: Key Spectral Features from DFT/PSD

| Feature | Mathematical Definition | Physiological Interpretation in EGM |

|---|---|---|

| Dominant Frequency (DF) | argmax_k (S[k]) | The peak frequency of depolarization; high DF often indicates rapid, organized sources (e.g., rotor cores) or rapid focal activity. |

| Organizational Index (OI) | Σ_{k∈BW} S[k]² / (Σ_{k∈BW} S[k])² | Quantifies concentration of power; higher OI suggests more periodic, organized activity. |

| Spectral Concentration (SC) | Σ_{k=f1}^{f2} S[k] / Σ_{k=0}^{fNyq} S[k] | Fraction of power within a band (e.g., 4-9 Hz for AF); indicates prevalence of pathologic frequencies. |

| Spectral Entropy | - Σ_{k∈BW} p_k log₂(p_k) where p_k=S[k]/ΣS | Measure of spectral randomness; high entropy suggests disorganized, complex activation. |

| Normalized Power in Bands | P_{band} / P_{total} | Power in predefined bands (e.g., 0-2 Hz: slow, 2-8 Hz: medium, 8-20 Hz: fast). |

Wavelet Transform & Time-Frequency Features

The Continuous Wavelet Transform (CWT) provides a time-frequency representation, crucial for non-stationary EGM analysis. CWT(a,b) = (1/√|a|) ∫ x(t) ψ((t-b)/a) dt, where *ψ is the mother wavelet, a is scale (inverse of frequency), and b is translation (time). Discrete Wavelet Transform (DWT) uses dyadic scaling for efficient decomposition into approximation (low-frequency) and detail (high-frequency) coefficients.

Table 2: Key Time-Frequency Features from Wavelet Analysis

| Feature | Description | Application in EGM Analysis |

|---|---|---|

| Wavelet Energy per Band | Energy of DWT detail coefficients at each decomposition level. | Tracks shifts in spectral content over time (e.g., transient high-frequency bursts). |

| Wavelet Entropy | Entropy calculated from the relative energy distribution across wavelet scales. | Quantifies temporal stability of signal organization. |

| Ridge Extraction | Tracking the scale (frequency) of maximum CWT magnitude over time. | Identifies the instantaneous dominant frequency trajectory. |

| Time-Dependent Spectral Peak | The peak frequency in the CWT magnitude spectrum at each time point. | Maps focal accelerations or wavebreak occurrences. |

Experimental Protocols for Feature Extraction

Protocol: DFT-Based Feature Extraction from Intracardiac EGMs

Objective: Compute standardized spectral features from unipolar or bipolar EGM recordings for substrate classification. Materials: See Scientist's Toolkit. Preprocessing Steps:

- Signal Selection: Isolate a 4-second stable recording segment (avoiding pacing artifacts or far-field intervals).

- Detrending: Apply a high-pass filter (cutoff: 0.5 Hz) or subtract a least-squares linear fit to remove baseline wander.

- Windowing: Apply a Hanning window to the segment to mitigate spectral leakage.

- Zero-Padding: Zero-pad the signal to the next power of two to increase frequency resolution. DFT Computation & Feature Extraction:

- Compute the FFT (fast implementation of DFT) on the preprocessed segment.

- Calculate the single-sided PSD. For sampling frequency Fs, the frequency vector resolves up to Fs/2.

- Identify the Dominant Frequency (DF) as the frequency bin with the maximum PSD magnitude in the 3-20 Hz range (valid for atrial/ventricular arrhythmias).

- Calculate Organizational Index (OI) and Spectral Entropy using the PSD values within the 3-20 Hz band.

- Compute Normalized Power in the Slow (3-5 Hz), Medium (5-8 Hz), and Fast (8-20 Hz) bands. Output: A feature vector [DF, OI, Spectral Entropy, Pslow, Pmedium, P_fast] for each EGM segment.

Protocol: Time-Frequency Analysis Using the Continuous Wavelet Transform

Objective: Characterize the temporal evolution of spectral content in complex fractionated EGMs. Preprocessing: Follow steps 1-2 from Protocol 3.1. CWT Computation:

- Mother Wavelet Selection: Choose the complex Morlet wavelet (

cmorin MATLAB/Python'spywt) for an optimal balance between time and frequency localization. - Scale Setup: Define scales linearly corresponding to frequencies from 1 Hz to Fs/2. Use at least 128 scales.

- CWT Execution: Compute the CWT, resulting in a complex matrix

W(a,b). Feature Extraction: - Compute the scalogram (magnitude of

W(a,b)squared). - Ridge Extraction: For each time point b, find the scale a that maximizes the scalogram magnitude. Convert scale to instantaneous frequency.

- Statistical Summaries: Calculate the mean, standard deviation, and skewness of the instantaneous dominant frequency over the 4-second window.

- Wavelet Entropy: Compute the total energy at each scale, normalize to a probability distribution, and calculate Shannon entropy. Output: A feature vector [Mean iDF, Std iDF, Skew iDF, Wavelet Entropy].

Title: Workflow for Spectral & Time-Frequency Feature Extraction from EGMs

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Tools for EGM Spectral Feature Research

| Item/Category | Example Product/Solution | Function in Research |

|---|---|---|

| High-Fidelity Data Acquisition | ADInstruments PowerLab, Intan RHD Recording System | Provides low-noise, high-resolution (≥1 kHz sampling) analog-to-digital conversion of raw analog EGMs. |

| Signal Processing Software Library | MATLAB Wavelet Toolbox, Python (SciPy, PyWavelets, NumPy) | Platforms for implementing DFT, CWT/DWT, and custom feature extraction algorithms. |

| Mother Wavelet for CWT | Complex Morlet Wavelet (cmor) | Provides a good trade-off between time and frequency resolution for biological signals. |

| Spectral Analysis Plugin | LabChart Pro ECG Analysis Module, EMKA iox2 | Commercial software offering built-in FFT and time-frequency analysis for rapid prototyping. |

| Validated Preprocessing Filters | Butterworth or Chebyshev IIR Digital Filters | Removes line noise (e.g., 50/60 Hz notch) and baseline wander without distorting signal content. |

| Reference Datasets | PhysioNet Computing in Cardiology Challenge Datasets, Custom Preclinical Porcine AF Models | Benchmarked, annotated EGM data for validating and comparing feature performance. |

Application Notes for Drug Development & Research

Quantifying Anti-Arrhythmic Drug (AAD) Effects

Use Case: Assess acute electrophysiological effect of a novel AAD on atrial fibrillation substrate. Protocol Adaptation:

- Baseline Recording: Acquire high-density epicardial or endocardial EGMs during induced AF in preclinical model.

- Post-Dose Recording: Acquire EGMs at peak plasma concentration of the compound.

- Feature Extraction: Apply Protocol 3.1 to multiple (e.g., 100) consecutive 4-second segments from both baseline and post-dose states.

- Statistical Analysis: Perform paired statistical testing (e.g., Wilcoxon signed-rank) on extracted features (e.g., Dominant Frequency, Spectral Entropy). Expected Outcome: An effective AAD targeting atrial remodeling may significantly reduce Dominant Frequency and increase Organizational Index, indicating slowed and more organized activity.

Identifying Ablation Targets via Time-Frequency Signatures

Use Case: Use wavelet-based features to identify sites of persistent high-frequency drivers. Protocol Adaptation:

- High-Density Mapping: Acquire EGMs from a grid/multi-electrode array during sustained arrhythmia.

- Feature Mapping: For each electrode site, compute the Mean Instantaneous Dominant Frequency (from Protocol 3.2) and Wavelet Entropy.

- Spatial Visualization: Create contour maps (feature maps) overlaid on anatomical geometry. Interpretation: Sites exhibiting persistently high Mean iDF with low Wavelet Entropy are candidate locations for stable rotational or focal sources.

Title: Integration of Spectral Features into EGM ML Research Pipeline

Application Notes and Protocols

Within a broader thesis on EGM signal processing for machine learning features research, quantifying signal complexity and organization is paramount for distinguishing pathological from physiological cardiac rhythms. Traditional linear features (e.g., amplitude, frequency) often fail to capture the intricate, non-linear dynamics of atrial and ventricular arrhythmias. This document details the application of non-linear and entropy-based features to intracardiac electrograms (EGMs) and surface ECGs.

1. Theoretical Foundation and Feature Definitions

Non-linear dynamics and information theory provide metrics to quantify the unpredictability, randomness, and complexity of a time series signal like an EGM.

Table 1: Key Non-Linear and Entropy-Based Features for EGM Analysis

| Feature | Mathematical Basis | Physiological Interpretation (in EGM context) | Typical Value Range (Normal Sinus Rhythm vs. Fibrillation) |

|---|---|---|---|

| Sample Entropy (SampEn) | Negative natural logarithm of the conditional probability that two sequences similar for m points remain similar at the next point (m+1). | Measures signal irregularity. Lower values indicate more self-similarity/regularity. | NSR: Lower (e.g., 0.5-1.2). AF/VF: Higher (e.g., 1.5-2.5). |

| Multiscale Entropy (MSE) | SampEn calculated over multiple temporal scales via coarse-graining. | Assesses complexity across different time scales. Healthy systems show high complexity across scales. | NSR: Entropy remains relatively high across scales. AF/VF: Entropy decays rapidly with scale. |

| Detrended Fluctuation Analysis (DFA) α-exponent | Quantifies long-range power-law correlations in a non-stationary signal. | α ~0.5: white noise (e.g., VF). α ~1.0: 1/f noise (healthy). α ~1.5: Brownian noise. | NSR: α ~0.8-1.2. AF: α ~0.5-0.8. VF: α ~0.5. |

| Lyapunov Exponent (λ) | Average rate of separation of infinitesimally close trajectories in state space. | Quantifies sensitivity to initial conditions (chaos). Positive λ suggests chaotic dynamics. | NSR: Near zero or slightly negative. Sustained AF/VF: Positive (e.g., 0.05-0.3 bits/s). |

| Lempel-Ziv Complexity (LZC) | Estimates the number of distinct substrings and their rate of occurrence. | Measures complexity in terms of compressibility. More complex = less compressible. | NSR: Lower complexity (~0.1-0.3). AF/VF: Higher complexity (~0.4-0.7). |

2. Experimental Protocol: Feature Extraction from High-Resolution EGMs

Objective: To compute a standardized panel of non-linear features from unipolar/bipolar intracardiac EGMs to classify arrhythmia substrates.

Materials & Reagents:

- Electrophysiology Recording System: (e.g., Labsystem Pro, EP-Workmate) with bandwidth 0.05-500 Hz.

- Catheter: Diagnostic electrophysiology catheter (e.g., duodecapolar, PentaRay).

- Signal Acquisition: Analog-to-digital converter (ADC) with ≥ 1 kHz sampling rate (≥ 2 kHz recommended).

- Reference Electrode: Surface ECG electrodes.

- Software: MATLAB (with Signal Processing Toolbox) or Python (SciPy, NumPy, nolds, antropy packages).

- Data: 60-second epochs of stable rhythm (e.g., Sinus Rhythm, Atrial Flutter, Atrial Fibrillation).

Protocol:

- Signal Acquisition & Preprocessing:

- Acquire EGM signals from targeted cardiac chambers.

- Apply a 0.5-250 Hz bandpass filter to remove baseline wander and high-frequency noise.

- For bipolar EGMs, ensure consistent inter-electrode spacing and orientation.

- Downsample to a standardized sampling frequency (Fs, e.g., 1000 Hz) if necessary.

- Normalize the signal to zero mean and unit variance.

Epoch Selection:

- Visually inspect and select a 10-30 second artifact-free, stable rhythm segment.

- Avoid segments with catheter movement or far-field interference.

State-Space Reconstruction (for DFA, Lyapunov):

- Use time-delay embedding: For signal x(i), construct state vectors: Y(i) = [x(i), x(i+τ), ..., x(i+(m-1)τ)].

- Estimate delay (τ) using the first minimum of the mutual information function.

- Estimate embedding dimension (m) using the false nearest neighbors method.

Feature Computation:

- Sample Entropy: Use the

entropy.sample_entropyfunction from theantropyPython package. Parameters: m=2, r=0.2 * (signal std. dev.). - Multiscale Entropy: Coarse-grain the time series to scales 1-20. Compute SampEn at each scale.

- DFA: Integrate and detrend signal in windows of varying sizes. Calculate scaling exponent α from the log-log plot of fluctuation vs. window size.

- Lempel-Ziv Complexity: Binarize the signal (values above median = 1, below = 0). Compute normalized LZC using standard algorithm.

- Sample Entropy: Use the

Validation & Statistical Analysis:

- Compute features for a cohort (e.g., n=20 patients per rhythm type).

- Perform Kruskal-Wallis test with post-hoc Dunn's test to identify significant (p<0.05) inter-group differences.

- Use principal component analysis (PCA) to visualize feature separability.

3. The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Computational Tools

| Item | Function in EGM Complexity Research |

|---|---|

| High-Density Mapping Catheter (e.g., Advisor HD Grid) | Provides dense, spatially coherent EGM data essential for analyzing organizational gradients. |

Open-Source Python Library: antropy |

Provides optimized, clinically validated implementations of SampEn, Permutation Entropy, LZC, and DFA. |

Custom MATLAB lyapunovExponent Script |

Implements Rosenstein's algorithm for estimating the largest Lyapunov exponent from short, noisy EGM data. |

| Clinical EP Database (e.g., CU Ventricular Tachyarrhythmia Database) | Provides validated, annotated EGM/ECG signals for benchmarking new features. |

| Phase Mapping Software Module | Converts voltage-time signals into phase-time signals, enabling analysis of rotor and wavefront dynamics via entropy. |

4. Workflow and Pathway Visualizations

Diagram Title: Non-Linear Feature Extraction & ML Classification Workflow

Diagram Title: Position within Broader EGM Feature Engineering Thesis