Rigorous Testing & Validation in Biomedical Engineering: Essential Methods for Reliable Device Development

This comprehensive guide explores the critical methodologies for testing and validating biomedical engineering devices, from foundational concepts to advanced comparative analysis.

Abstract

This comprehensive guide explores the critical methodologies for testing and validating biomedical engineering devices, from foundational concepts to advanced comparative analysis. Tailored for researchers, scientists, and drug development professionals, it addresses the complete lifecycle: establishing core principles (exploratory), applying specific testing protocols (methodological), resolving common challenges (troubleshooting), and proving safety/efficacy against standards (validation). The article synthesizes current best practices, regulatory frameworks, and technological innovations to equip professionals with a structured approach for developing robust, compliant, and clinically effective medical devices.

Building the Bedrock: Core Principles and Regulatory Frameworks for Device Testing

Technical Support Center: Device Testing & Validation FAQs

This support center addresses common experimental and validation challenges within the context of biomedical device research, aligned with methodologies for rigorous engineering testing.

FAQ 1: Signal Noise in Wearable ECG Data During Motion Artifact Testing

Q: Our wearable ECG patch shows significant baseline wander and noise during prescribed motion artifact validation protocols, obscuring ST-segment analysis.

A: This is a common issue in dynamic validation. Implement a multi-step filtering and validation workflow.

- Hardware Check: Ensure electrode skin impedance is <2 kΩ at the start of the experiment. Re-prep skin with abrasive gel if higher.

- Software Filtering Protocol:

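- Apply a 0.5–40 Hz bandpass Butterworth filter (4th order) to remove baseline wander and high-frequency muscle noise. Because a 0.5 Hz high-pass corner can distort the ST segment, run the filter in zero-phase (forward-backward) form, or lower the corner toward 0.05 Hz, when ST-segment morphology is the endpoint.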

- Use an adaptive filter utilizing a simultaneous accelerometer signal (from the device itself) as a reference input to subtract motion artifact.

- Validation Step: After filtering, validate signal integrity by ensuring the amplitude of a simulated 1 mV, 10 Hz calibration signal injected into the circuit is recovered within ±10%.
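The ±10% recovery check in the validation step can be sketched as follows. This is a minimal illustration, not the authors' procedure: it estimates the amplitude of the 10 Hz calibration tone by projecting the record onto sine and cosine references (a single-bin DFT). The function names, the 500 Hz sampling rate, and the synthetic record are assumptions made for the example; in practice the input would be the output of the filtering chain.

```python
import math

def tone_amplitude(samples, fs_hz, tone_hz):
    """Estimate the peak amplitude of a sinusoid at tone_hz by projecting
    the record onto cosine and sine references (a single-bin DFT)."""
    n = len(samples)
    w = 2.0 * math.pi * tone_hz / fs_hz
    in_phase = sum(x * math.cos(w * i) for i, x in enumerate(samples))
    quadrature = sum(x * math.sin(w * i) for i, x in enumerate(samples))
    return 2.0 * math.hypot(in_phase, quadrature) / n

def calibration_recovered(samples, fs_hz, tone_hz=10.0, nominal_mv=1.0, tol=0.10):
    """Return (passed, amplitude): passed is True when the recovered
    amplitude lies within +/- tol of the nominal calibration amplitude."""
    amp = tone_amplitude(samples, fs_hz, tone_hz)
    return abs(amp - nominal_mv) <= tol * nominal_mv, amp

# Hypothetical record: 10 s at 500 Hz (assumed), a 1 mV, 10 Hz tone plus
# 0.3 Hz baseline wander. The window holds whole cycles of both components.
FS_HZ = 500.0
record = [
    1.0 * math.sin(2 * math.pi * 10.0 * i / FS_HZ)
    + 0.4 * math.sin(2 * math.pi * 0.3 * i / FS_HZ)
    for i in range(5000)
]
passed, amplitude_mv = calibration_recovered(record, FS_HZ)

# An over-attenuated tone (0.8 mV) should fail the +/-10% criterion.
attenuated = [0.8 * math.sin(2 * math.pi * 10.0 * i / FS_HZ) for i in range(5000)]
failed_case, _ = calibration_recovered(attenuated, FS_HZ)
```

The projection is only exact when the analysis window contains a whole number of tone cycles, so the record length should be chosen accordingly.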

FAQ 2: Inconsistent Release Kinetics from a Novel Drug-Eluting Implant Coating

Q: In vitro elution testing of our therapeutic implant coating shows a high coefficient of variation (>15%) between batches in cumulative drug release at 7 days.

A: Inconsistent elution points to coating morphology or degradation inconsistencies.

- Protocol Refinement:

- Sink Conditions: Confirm the volume of elution medium (e.g., PBS pH 7.4) is at least 3x the saturation volume of the drug. Agitate at 100 RPM in a controlled 37°C environment.

- Characterization Mandatory: Prior to each elution test, characterize the coating thickness via profilometry at 5 points per sample and the coating porosity via SEM image analysis. Correlate these metrics with the release profile.

- Troubleshooting Table:

| Observation | Possible Root Cause | Corrective Experimental Action |
|---|---|---|
| Fast, erratic release | Coating cracks or poor adhesion | Perform a coating adhesion test (e.g., tape test per ASTM D3359) and SEM imaging before elution. |
| Slow, variable release | Inconsistent polymer crystallinity or thickness | Standardize solvent evaporation rate during coating and implement strict thickness QC. |
| Burst release varies | Drug aggregation in coating matrix | Implement sonication of drug-polymer solution pre-coating; check for moisture during storage. |
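The between-batch coefficient of variation from the question, and the correlation between coating thickness and release called for in the protocol, can be computed along these lines. This is a minimal sketch; the batch values are hypothetical illustration data, not measurements from the source.

```python
import math
import statistics

def percent_cv(values):
    """Coefficient of variation in percent (sample standard deviation / mean)."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)

def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    mx, my = statistics.mean(xs), statistics.mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical per-batch data: cumulative release at day 7 (%) and the mean
# of the 5 profilometry thickness readings per sample (micrometres).
release_pct = [62.0, 48.0, 71.0, 55.0, 80.0]
thickness_um = [9.8, 12.1, 8.9, 11.0, 8.0]

batch_cv = percent_cv(release_pct)            # compare against the 15% concern level
r_thickness = pearson_r(thickness_um, release_pct)
```

A strongly negative correlation, as in this made-up data, would suggest that thickness control is the place to start; a weak one would shift attention to porosity or drug dispersion.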

FAQ 3: High False Positive Rate in Optical Lateral Flow Diagnostic Prototype

Q: Our rapid diagnostic test strip for Protein X shows false positives in 20% of negative human serum controls when read by our optical reader, though visual read is accurate.

A: This indicates a reader calibration or material autofluorescence issue.

- Experimental Protocol for Reader Validation:

- Create a set of 10 negative control strips (with 0 ng/mL Protein X) from the same manufacturing batch.

- Scan each strip with the reader in a dark chamber. Record the raw fluorescence intensity at the test line (T) and control line (C).

- Calculate the mean (μ) and standard deviation (σ) of the T/C ratio for these true negatives.

- Set Threshold: The positivity threshold must be μ + 5σ. Recalibrate reader software accordingly.

- Check Reagents: The nitrocellulose membrane or conjugation pads may exhibit autofluorescence. Test a blank strip (no antibodies) under the reader. If signal is high, source alternative lots with low fluorescence background.
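The μ + 5σ threshold from the reader validation protocol can be sketched as below. The T/C ratios are hypothetical, and the use of the sample (n − 1) standard deviation is an assumption, since the protocol does not say which estimator is intended.

```python
import statistics

def positivity_threshold(tc_ratios, k=5.0):
    """Threshold = mean + k * standard deviation of the T/C ratio measured
    on true-negative strips (sample standard deviation assumed)."""
    mu = statistics.mean(tc_ratios)
    sigma = statistics.stdev(tc_ratios)
    return mu + k * sigma

def is_positive(test_line, control_line, threshold):
    """Call a strip positive only if its T/C ratio exceeds the threshold."""
    return (test_line / control_line) > threshold

# Hypothetical T/C ratios from 10 negative control strips (0 ng/mL Protein X).
negative_ratios = [0.020, 0.024, 0.018, 0.022, 0.021,
                   0.019, 0.023, 0.020, 0.025, 0.018]
threshold = positivity_threshold(negative_ratios)

# None of the negatives used for calibration should be called positive.
false_positives = sum(1 for ratio in negative_ratios if ratio > threshold)
```

With only 10 strips the estimate of σ is itself uncertain, so the threshold is worth re-deriving whenever a new membrane or conjugate lot is introduced.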

FAQ 4: Accelerated Aging Failure of Implantable Polymer Encapsulation

Q: After 6 months of accelerated aging (70°C per ASTM F1980), our implantable device’s polymer sheath shows reduced tensile strength, failing ISO 14708-1 standards.

A: Accelerated aging can reveal polymer instability not seen in initial tests.

- Detailed Failure Analysis Protocol:

- FTIR Analysis: Compare aged vs. unaged polymer samples. Look for new oxidation peaks (e.g., carbonyl group ~1700 cm⁻¹).

- DSC Protocol: Run Differential Scanning Calorimetry. Weigh 5-10 mg samples in sealed pans. Heat from -50°C to 300°C at 10°C/min under N₂. Note changes in Glass Transition Temperature (Tg) and melting enthalpy, indicating chain scission or crosslinking.

- Elution Test: Soak aged polymer in simulated body fluid (37°C, 72 hrs) and analyze leachates via HPLC for degradation products.
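When interpreting the failure, it helps to know how much real time the aging exposure represents. ASTM F1980 uses a Q10 (Arrhenius-type) relation, AAF = Q10^((T_AA − T_RT)/10). The sketch below applies it to the 6 months at 70°C from the question; Q10 = 2 and the two reference temperatures (25°C ambient, 37°C in-body) are assumptions chosen for illustration, not values given in the source.

```python
def accelerated_aging_factor(t_aging_c, t_reference_c, q10=2.0):
    """Accelerated aging factor: Q10 raised to (temperature difference / 10)."""
    return q10 ** ((t_aging_c - t_reference_c) / 10.0)

def real_time_equivalent(duration, t_aging_c, t_reference_c, q10=2.0):
    """Real-time duration (same unit as the input) represented by an
    accelerated exposure at t_aging_c relative to t_reference_c."""
    return duration * accelerated_aging_factor(t_aging_c, t_reference_c, q10)

# 6 months at 70 degC, interpreted against two assumed reference temperatures.
months_vs_ambient = real_time_equivalent(6.0, 70.0, 25.0)  # shelf storage
months_vs_body = real_time_equivalent(6.0, 70.0, 37.0)     # implanted service
```

The Q10 model assumes a single degradation mechanism across the temperature range. If FTIR or DSC shows chemistry that only appears at 70°C, the extrapolation is not valid and a lower aging temperature should be considered.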

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Testing & Validation |
|---|---|
| Simulated Body Fluid (SBF) | Ionic solution mimicking human blood plasma for in vitro bioactivity and degradation studies of implants. |
| Phosphate-Buffered Saline (PBS) with 0.1% Tween 20 | Standard elution and washing medium for drug release and diagnostic assays; surfactant reduces non-specific binding. |
| Fluorescently-Labeled Albumin (e.g., FITC-BSA) | Model protein for visualizing and quantifying drug delivery carrier uptake, coating uniformity, and fouling. |
| Electrode Impedance Test Gel | Standardized conductive gel for validating the input impedance and performance of diagnostic ECG/EEG electrodes. |
| NIST-Traceable Flow Rate Calibrator | Essential for validating drug infusion pumps and microfluidic diagnostic devices to ensure volumetric accuracy. |
| Standardized Wearable Sensor Data Sets (e.g., PPG-DaLiA) | Publicly available, annotated physiological datasets for validating algorithm performance against a benchmark. |

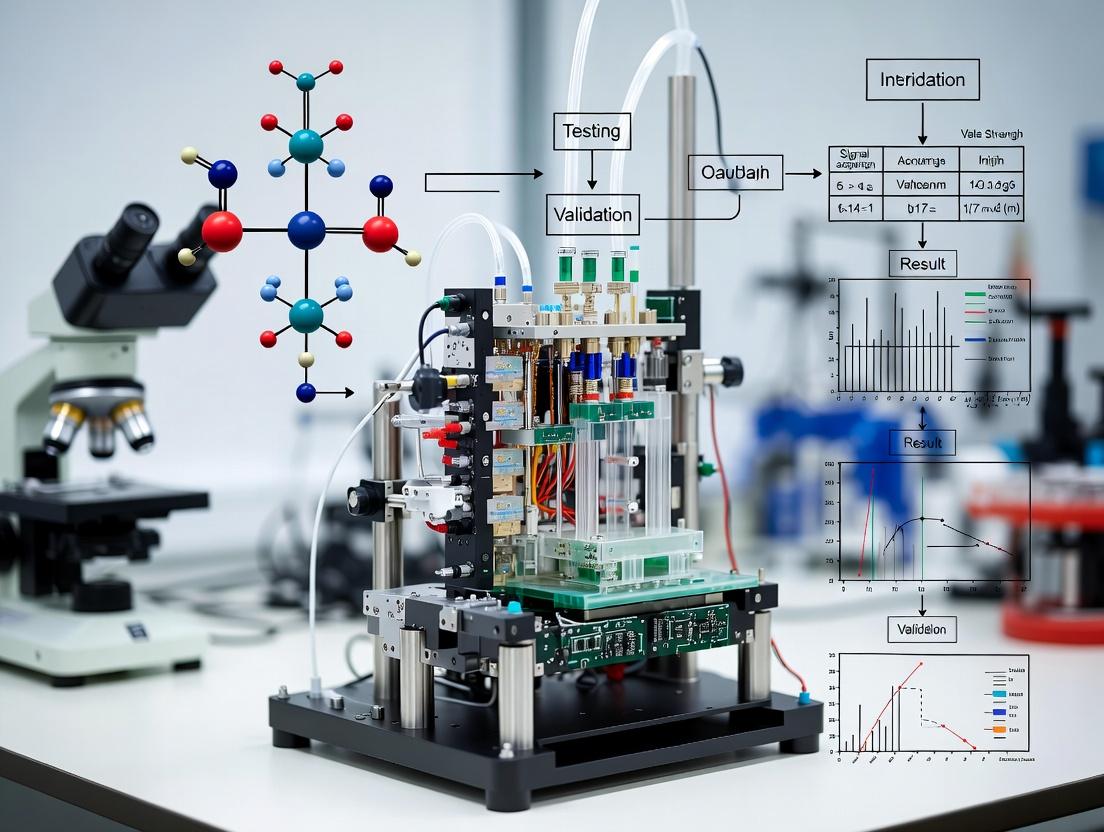

Experimental Workflow & Pathway Diagrams

Device Validation Pathway

Biosignal Processing for Wearable Validation

Technical Support Center: In Vitro Diagnostic (IVD) Device Validation

FAQs & Troubleshooting Guides

Q1: Our microfluidic immunoassay cartridge is showing high inter-assay CV (>20%) for low-concentration analyte targets. What are the primary investigative steps?

A: High variability at low concentrations often points to reagent instability or inconsistent fluidic handling.

- Check Reagent Storage & Handling: Confirm aliquots of detection antibodies and enzyme conjugates are single-use, flash-frozen, and stored at -80°C. Avoid freeze-thaw cycles.

- Validate Fluidic Precision: Use a fluorescent dye in the assay buffer. Run 20 cartridges, imaging the mixing chamber at a fixed time point, and calculate the CV of pixel intensity across cartridges. A CV >10% points to a manufacturing flaw in the pump or valve diaphragm.

- Review Surface Chemistry: High background or uneven spotting during cartridge fabrication can cause variability. Re-validate the blocking protocol (see Experimental Protocol 1).

Q2: During preclinical validation of a continuous glucose monitor (CGM), how do we distinguish sensor drift from true physiological signal?

A: Sensor drift is a non-physiological, time-dependent change in signal. Isolate it through a controlled in vitro bench test.

- Protocol: Immerse the CGM sensor in a static, temperature-controlled (37°C) phosphate buffer with a known, stable glucose concentration (e.g., 100 mg/dL) for the intended wear period (e.g., 14 days). Record signal hourly.

- Analysis: Perform linear regression on the recorded signal over time. A significant slope (p<0.05) indicates inherent sensor drift. This drift profile must be subtracted from in vivo data.

- Calibration: Ensure your algorithm uses multiple, staggered in vivo reference measurements (e.g., fingerstick) to correct for both drift and individual bio-variability.
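The regression in the analysis step can be sketched as follows: an ordinary least-squares fit of bench signal against time, a t statistic for the slope, and subtraction of the fitted drift. The bench record is synthetic (an assumed −0.02 mg/dL per hour drift plus a small ripple), and the standard library has no t-distribution, so the p-value is left to a statistics package. For a 14-day hourly record, |t| above roughly 1.97 corresponds to p < 0.05 (two-sided).

```python
import math

def fit_line(hours, signal):
    """Least-squares line through (hours, signal).
    Returns slope, intercept, and the t statistic of the slope."""
    n = len(hours)
    mean_t = sum(hours) / n
    mean_y = sum(signal) / n
    sxx = sum((t - mean_t) ** 2 for t in hours)
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(hours, signal))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_t
    residual_ss = sum((y - (intercept + slope * t)) ** 2
                      for t, y in zip(hours, signal))
    slope_se = math.sqrt(residual_ss / (n - 2) / sxx)
    return slope, intercept, slope / slope_se

def subtract_drift(hours, signal, slope):
    """Remove the fitted linear drift, referenced to the first sample."""
    t0 = hours[0]
    return [y - slope * (t - t0) for t, y in zip(hours, signal)]

# Synthetic bench record: 14 days of hourly readings in 100 mg/dL buffer.
hours = list(range(14 * 24))
bench = [100.0 - 0.02 * t + 0.5 * math.sin(t) for t in hours]

slope, intercept, t_stat = fit_line(hours, bench)
corrected = subtract_drift(hours, bench, slope)
```

A linear model is the simplest choice; if residuals show curvature (for example, a fast initial run-in followed by slow decay), a piecewise or exponential drift model would be the more honest description.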

Q3: What are key failure modes for a qPCR-based point-of-care sepsis panel, and how are they controlled?

A: Primary failure modes are inhibition, cross-contamination, and thermal cycler performance.

- Inhibition Control: Each cartridge must include an internal control (IC)—a non-competitive synthetic template with primer/probe set in a separate channel. Failure of the IC signal indicates a sample matrix inhibition.

- Cross-contamination: The workflow from sample lysis to amplification must be a closed system. Validate with a high-positive sample adjacent to a no-template control (NTC) in the same instrument run. All NTCs must show no amplification.

- Thermal Uniformity: Perform a spatial temperature verification across the Peltier block using an independent thermal probe. Acceptable variation is ±0.5°C at 95°C and 60°C.

Experimental Protocols

Protocol 1: Validation of Protein Immobilization on a Planar Waveguide Biosensor

- Objective: To quantify the density and activity of capture antibodies immobilized on a sensor surface.

- Methodology:

- Chip Activation: Clean silicon nitride waveguide chips with oxygen plasma. Incubate in 2% (3-aminopropyl)triethoxysilane (APTES) in ethanol for 30 min.

- Cross-linking: Treat with 2.5% glutaraldehyde in PBS for 1 hour.

- Antibody Immobilization: Spot with 1 mg/mL of target capture antibody in phosphate buffer (pH 7.4) for 2 hours.

- Blocking: Incubate in 1% BSA + 0.5% casein in PBS for 12 hours at 4°C.

- Quantification (Fluorescent Method): Incubate with a fluorescently-labeled anti-species IgG (e.g., Alexa Fluor 647) at a known concentration. Image with a calibrated fluorescence scanner. Compare to a standard curve of the same antibody printed at known densities.

- Success Criterion: Immobilization density > 5000 molecules/μm² with a spatial CV < 15%.

Protocol 2: Fatigue Testing of a Percutaneous Lead for a Neuromodulation Device

- Objective: To simulate years of mechanical stress from patient movement.

- Methodology:

- Fixture Setup: Secure the lead’s proximal end in a fixed clamp. The distal electrode segment is clamped to a linear actuator.

- Motion Profile: Program the actuator to induce a combination of flexion (±30°) and torsion (±90°) cycles at a frequency of 2 Hz.

- Monitoring: Conduct the test in a 37°C saline bath. Perform continuous electrical impedance monitoring for open or short circuits.

- Endpoint: Test is run to 10 million cycles (simulating ~10 years) or until failure (impedance change >50% or complete fracture).

- Analysis: Plot impedance vs. cycle count. Perform post-test SEM imaging on fracture points.

Table 1: Performance Comparison of Clinical Chemistry Analyzer Assays

| Analyte | CLIA Allowable Error | Observed Total Error | Precision (CV%) | Accuracy (Bias %) |
|---|---|---|---|---|
| Serum Na+ | ±4 mmol/L | 1.2 mmol/L | 0.4% | 0.8% |
| Blood Glucose | ±10% or 6 mg/dL | 4.2% | 1.8% | 2.1% |
| Troponin I | ±30% (at 99th %ile) | 12.5% | 5.2% (at LoD) | -3.8% |

Table 2: Failure Mode and Effects Analysis (FMEA) for a Syringe Pump Driver

| Component | Potential Failure Mode | Effect | Severity (1-10) | Occurrence (1-10) | Detection (1-10) | RPN |
|---|---|---|---|---|---|---|
| Stepper Motor | Step loss under high load | Under-dosing | 9 | 3 | 2 | 54 |
| Lead Screw | Backlash | Volume inaccuracy | 7 | 4 | 3 | 84 |
| Optical Sensor | Dust contamination | Failure to home | 4 | 5 | 1 | 20 |

Visualizations

Diagram 1: IVD Device Verification Workflow

Diagram 2: Key Signaling Pathway in Sepsis Immunoassay

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent/Material | Function in Device Validation | Key Consideration |
|---|---|---|
| NIST-traceable Calibrators | Provides absolute reference for analytical accuracy. Essential for establishing the measurement traceability chain. | Verify commutability with patient samples across the device's measuring interval. |
| Human Serum Panels | Characterize assay performance across diverse genetic, disease, and interferent matrices. | Must be ethically sourced, IRB-approved. Include samples with common interferents (bilirubin, lipids, hemoglobin). |
| Stable Cell Lines | For functional assays (e.g., cytokine release). Provide a consistent biological response to stimulus. | Ensure mycoplasma-free status and authenticate cell lines (STR profiling) regularly. |
| Functionalized Nanoparticles | Used as signal amplifiers in lateral flow or chemiluminescence assays (e.g., gold, latex, magnetic). | Consistency in conjugate size, surface charge, and binding capacity is critical for lot-to-lot reproducibility. |
| Synthetic Biomimetic Fluids | Simulates blood, interstitial fluid, or saliva for sterile, reproducible benchtop durability testing. | Must match key physicochemical properties (viscosity, pH, ionic strength, surface tension). |

Technical Support Center: Troubleshooting Guides & FAQs

FAQ 1: Our electrical safety test for IEC 60601-1 compliance is failing on leakage current measurements. What are the most common root causes and corrective actions?

- Answer: High leakage current failures typically stem from inadequate insulation, component degradation, or improper grounding. Follow this protocol:

- Isolate the Circuit: Disconnect the device under test (DUT) and measure leakage from each part (applied part, enclosure, mains part) separately using a calibrated leakage current tester.

- Inspect Insulation: Check for insufficient creepage and clearance distances, especially in power supplies and transformers. Verify dielectric strength test results.

- Check Y-Capacitors: Evaluate filtering Y-capacitors bridging the primary and secondary circuits; their value and placement are critical.

- Verify Grounding: Ensure protective earth connection integrity has a resistance of <0.1Ω.

- Environmental Factors: Retest under high humidity conditions (93% RH) as per standard, as moisture can significantly increase leakage.

FAQ 2: During design validation per FDA 21 CFR 820.30, our failure modes and effects analysis (FMEA) is not effectively predicting field failures. How can we improve its rigor?

- Answer: Ineffective FMEAs often lack appropriate severity, occurrence, and detection rankings based on real data. Implement this enhanced protocol:

- Severity (S): Base rankings on clinical harm data from post-market surveillance of predicate devices, not just engineering judgement.

- Occurrence (O): Use component-level reliability data (e.g., MTBF from MIL-HDBK-217F or field return data) to quantify probability. For new components, use accelerated life testing data.

- Detection (D): Rank based on the validated statistical power of your verification test (e.g., sample size justification showing 95% confidence to detect the failure mode).

- Action Threshold: Mandate risk mitigation actions for any Risk Priority Number (RPN) > 100 and any individual severity rating ≥ 8 (on a 1-10 scale).
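The action threshold above combines two independent triggers, which matters because a high-severity item can hide behind a modest RPN. The sketch below applies the rule to the three rows of the syringe pump FMEA table earlier in this guide; the class and method names are illustrative assumptions.

```python
from dataclasses import dataclass

@dataclass
class FmeaRow:
    component: str
    severity: int    # 1-10
    occurrence: int  # 1-10
    detection: int   # 1-10

    @property
    def rpn(self) -> int:
        """Risk Priority Number = Severity x Occurrence x Detection."""
        return self.severity * self.occurrence * self.detection

    def needs_mitigation(self, rpn_limit: int = 100, severity_limit: int = 8) -> bool:
        """Mitigation is mandated if RPN exceeds the limit OR severity alone is high."""
        return self.rpn > rpn_limit or self.severity >= severity_limit

# Rows taken from the syringe pump driver FMEA table.
rows = [
    FmeaRow("Stepper Motor", severity=9, occurrence=3, detection=2),
    FmeaRow("Lead Screw", severity=7, occurrence=4, detection=3),
    FmeaRow("Optical Sensor", severity=4, occurrence=5, detection=1),
]

rpns = {row.component: row.rpn for row in rows}
flagged = [row.component for row in rows if row.needs_mitigation()]
```

Here the stepper motor is flagged on severity alone even though its RPN of 54 is the middle value of the three, which is exactly the case an RPN-only threshold would miss.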

FAQ 3: Our technical file for EU MDR (Regulation (EU) 2017/745) compliance is being challenged for insufficient clinical evaluation. What specific evidence linkages are required?

- Answer: The EU MDR requires a clear, traceable route from clinical data to claims. Follow this evaluation protocol:

- State of the Art (SOTA) Analysis: Create a comparator table of your device and 3+ similar devices on the EU market, comparing materials, energy source, principle of operation, and intended purpose.

- Equivalent Device Justification: If claiming equivalence to another device in order to rely on its clinical data, demonstrate technical, biological, and clinical equivalence. The regulation sets no numeric limit; a tolerance such as less than 10% divergence in any key parameter is an internal working criterion and must itself be justified as clinically insignificant.

- Literature Review Protocol: Perform a systematic review per PRISMA guidelines. Document the search strategy (databases, keywords, inclusion/exclusion criteria) and perform a critical appraisal of each study using a tool like CASP.

- Residual Risk-Benefit Profile: Create a trace matrix linking all identified hazards (from risk management file), associated clinical outcomes, the benefit of the device for the target population, and the post-market surveillance plan to monitor unresolved risks.

Quantitative Data Summary: Key Regulatory Testing Parameters

| Regulatory Standard / Test | Key Quantitative Parameter | Typical Acceptance Criteria | Common Test Standard Reference |
|---|---|---|---|
| IEC 60601-1 (Electrical Safety) | Patient Leakage Current (NC) | < 10 µA (CF-type); < 100 µA (B/BF-type) | IEC 60601-1, Clause 8 |
| IEC 60601-1-2 (EMC) | Radiated Immunity | 3 V/m, 80 MHz - 2.7 GHz | IEC 61000-4-3 |
| ISO 10993-5 (Biocompatibility) | Cytotoxicity (Elution Method) | Cell viability ≥ 70% | MTT or XTT Assay |
| ISO 11608 (Needle-Based Systems) | Dose Accuracy | Mean ± 5% of nominal dose | ISO 11608-1 |
| FDA Software Validation | Defect Detection Rate (for SOUP) | > 99% for major faults | IEC 62304, Annex B |

Experimental Protocol: Biocompatibility Assessment per ISO 10993-5

Title: In Vitro Cytotoxicity Testing via Extract Elution Method

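- Sample Preparation: Prepare an extract using the device material or a representative sample. Use a polar vehicle (e.g., cell culture medium with serum) and, where justified, a non-polar vehicle (e.g., vegetable oil) as per ISO 10993-12. DMSO is a polar aprotic solvent rather than a non-polar one; if it is used, keep its final concentration in the culture low enough to avoid solvent toxicity. Use a surface area-to-volume ratio of 3 cm²/mL or 0.1 g/mL. Incubate at 37°C for 24±2 hours.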

- Cell Culture: Use L-929 mouse fibroblast cells. Culture in RPMI 1640 medium with 10% fetal bovine serum at 37°C in a 5% CO₂ incubator.

- Exposure: Plate cells in a 96-well plate at a density of 1 x 10⁴ cells/well. Incubate for 24 hours to form a sub-confluent monolayer. Replace growth medium with 100 µL of the device extract. Include a negative control (high-density polyethylene) and a positive control (latex or 0.5% phenol solution). Use 6 replicate wells per sample.

- Incubation: Incubate cells with extract for 24±2 hours.

- Viability Assessment: Perform MTT assay. Add 10 µL of MTT reagent (5 mg/mL) to each well. Incubate for 2-4 hours. Remove medium and add 100 µL of solubilization solution (e.g., isopropanol). Shake gently.

- Data Analysis: Measure absorbance at 570 nm using a microplate reader. Calculate percent cell viability relative to the negative control. A reduction in viability by >30% (i.e., <70% viability) indicates a cytotoxic potential.
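The data analysis step reduces to a ratio of mean absorbances and a comparison against the 70% limit. A minimal sketch follows, using hypothetical OD570 readings from 6 replicate wells per group. Blank subtraction is omitted because the protocol does not specify it; add it if your plate layout includes medium-only wells.

```python
import statistics

def percent_viability(sample_od, negative_control_od):
    """Viability of extract-treated cells relative to the negative control (%)."""
    return 100.0 * statistics.mean(sample_od) / statistics.mean(negative_control_od)

def is_cytotoxic(viability_pct, limit_pct=70.0):
    """Viability below 70% (a reduction of more than 30%) flags cytotoxic potential."""
    return viability_pct < limit_pct

# Hypothetical absorbance readings at 570 nm, 6 replicate wells each.
negative_control = [0.82, 0.80, 0.85, 0.81, 0.83, 0.79]
device_extract = [0.50, 0.52, 0.49, 0.51, 0.48, 0.50]

viability = percent_viability(device_extract, negative_control)
cytotoxic = is_cytotoxic(viability)
```

The positive control should be run through the same calculation; if it does not come out clearly cytotoxic, the assay run itself is suspect and the sample result should not be reported.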

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Biomedical Device Testing |
|---|---|
| L-929 Mouse Fibroblast Cells | Standardized cell line for in vitro cytotoxicity testing per ISO 10993-5. |
| MTT/XTT Reagent Kit | Colorimetric assay for quantifying cell viability and proliferation. |
| Defibrinated Sheep Blood | Used for hemocompatibility testing (hemolysis) per ISO 10993-4. |
| PyroGene Recombinant Factor C Assay | Recombinant endotoxin detection reagent; an alternative to LAL testing that avoids horseshoe crab lysate. |
| Fluorescein Sodium Salt | Tracer agent for validating drug delivery device dose accuracy and spray patterns. |
| ASTM F2503 Non-MRI Conditional Marker | Passive implant marker for safety testing of devices in MRI environments. |

Diagram 1: Integrated Regulatory Strategy Workflow

Diagram 2: FMEA Risk Control Verification Logic

Technical Support Center: Troubleshooting Guides & FAQs

FAQ 1: Our device verification test (e.g., software unit test) passed, but the subsequent validation study with clinicians failed. How do we resolve this disconnect?

Answer: This indicates a gap between building the device right (verification against design inputs) and building the right device (validation against user needs). Troubleshooting steps:

- Trace Requirements: Re-audit your validation failure points against the User Needs and Design Inputs. A common root cause is ambiguous or incomplete design inputs.

- Review Risk Management File: Check your ISO 14971 Risk Management File. Was this specific use scenario identified as a hazard? If not, update your hazard analysis and risk control measures.

- Revisit Validation Protocol: Ensure your validation protocol accurately simulates real-world use, including user training levels and environmental factors.

- Protocol (Root Cause Analysis):

- Step 1: Form a cross-functional team (R&D, Clinical, QA).

- Step 2: Map the specific validation failure to the traced design input.

- Step 3: Conduct a 5-Whys analysis to determine if the cause was a verification shortfall (e.g., test coverage) or a requirement definition error.

- Step 4: Update the Risk Management File with new or revised hazardous situations and severity/probability estimates.

FAQ 2: During process qualification (PQ), we are observing unacceptable variation in a critical coating thickness. What is the systematic approach to resolve this?

Answer: Process variation in PQ suggests the process is not in a state of control. Follow this guide:

- Immediate Containment: Segregate any affected batches.

- Analyze Equipment Qualification (IQ/OQ): Verify that the coating equipment's installation and operational qualifications are documented and that all equipment is operating within specified parameters (e.g., spray pressure, nozzle speed, environmental controls).

- Check Material Consistency: Review the incoming inspection records for the coating reagent. Perform a Gage R&R (Repeatability & Reproducibility) study on the measurement system itself to ensure the variation is not from the measurement tool.

- Protocol (Gage R&R Study):

- Step 1: Select 10 representative samples covering the expected thickness range.

- Step 2: Have 3 trained operators measure each sample 3 times in random order.

- Step 3: Use ANOVA analysis to calculate variation components: equipment variation, appraiser variation, and part-to-part variation.

- Step 4: If the measurement system variation exceeds 10% of the total process variation, the measurement system must be improved before further PQ.
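The ANOVA decomposition in Steps 3 and 4 can be sketched for a balanced crossed design (parts × operators × repeats). This is an illustration under stated assumptions: the 10% criterion is interpreted as the ratio of standard deviations (%GRR of total variation), negative variance estimates are clamped to zero, and the data set is a deterministic toy example rather than real measurements.

```python
import math

def gage_rr(data):
    """Crossed Gage R&R by two-way ANOVA.
    data[i][j] is the list of repeat readings of part i by operator j."""
    p, o, r = len(data), len(data[0]), len(data[0][0])
    grand = sum(x for part in data for cell in part for x in cell) / (p * o * r)
    part_means = [sum(x for cell in part for x in cell) / (o * r) for part in data]
    op_means = [sum(x for part in data for x in part[j]) / (p * r) for j in range(o)]
    cell_means = [[sum(cell) / r for cell in part] for part in data]

    ss_part = o * r * sum((m - grand) ** 2 for m in part_means)
    ss_op = p * r * sum((m - grand) ** 2 for m in op_means)
    ss_cells = r * sum((cell_means[i][j] - grand) ** 2
                       for i in range(p) for j in range(o))
    ss_inter = ss_cells - ss_part - ss_op
    ss_error = sum((x - cell_means[i][j]) ** 2
                   for i in range(p) for j in range(o) for x in data[i][j])

    ms_part = ss_part / (p - 1)
    ms_op = ss_op / (o - 1)
    ms_inter = ss_inter / ((p - 1) * (o - 1))
    ms_error = ss_error / (p * o * (r - 1))

    var_repeat = ms_error                                 # equipment variation
    var_inter = max(0.0, (ms_inter - ms_error) / r)       # operator x part
    var_op = max(0.0, (ms_op - ms_inter) / (p * r))       # appraiser variation
    var_part = max(0.0, (ms_part - ms_inter) / (o * r))   # part-to-part
    var_grr = var_repeat + var_op + var_inter
    var_total = var_grr + var_part
    return {
        "var_repeatability": var_repeat,
        "var_operator": var_op,
        "var_part": var_part,
        "pct_grr": 100.0 * math.sqrt(var_grr / var_total),
    }

# Toy data: 10 parts, 3 operators, 3 repeats, built from fixed offsets so the
# variance components are known in advance (hypothetical thickness values).
part_values = [10.0 + i for i in range(10)]
operator_bias = [0.0, 0.1, -0.1]
repeat_noise = [0.0, 0.05, -0.05]
data = [[[pv + ob + rn for rn in repeat_noise] for ob in operator_bias]
        for pv in part_values]

result = gage_rr(data)
measurement_system_ok = result["pct_grr"] <= 10.0
```

Step 1's requirement that samples span the expected thickness range matters here: if the selected parts are too similar, part-to-part variance shrinks and %GRR is inflated even for a good gauge.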

FAQ 3: How do we integrate ISO 14971 risk management activities with design verification and validation (V&V) timelines?

Answer: Risk management is not a one-time activity. It must be iterative and drive V&V planning. A common error is performing risk analysis after V&V.

- Integration Workflow:

- Risk Analysis: Identify hazards and hazardous situations before finalizing design inputs.

- Risk Control: Define risk control measures (e.g., alarm, hardware design, protective packaging). These measures become specific design inputs and verification items.

- Verification: Execute tests to prove risk control measures are implemented correctly (verification of design outputs).

- Validation: Confirm that residual risk is acceptable and that risk controls are effective in the hands of the user.

Visualization: Risk Management & V&V Integration Workflow

Diagram Title: Integration of Risk Management with Design V&V

Table 1: Core Definitions in Biomedical Device Testing

| Term | ISO Definition Context | Core Question Answered | Primary Objective |
|---|---|---|---|
| Verification | Confirmation, through provision of objective evidence, that specified requirements have been fulfilled. (ISO 9000) | "Did we build the device right?" | Ensure design outputs meet design input specifications. |
| Validation | Confirmation, through provision of objective evidence, that the requirements for a specific intended use or application have been fulfilled. (ISO 9000) | "Did we build the right device?" | Ensure the device meets user needs and intended uses in its operational environment. |
| Qualification | Process of demonstrating whether an entity is capable of fulfilling specified requirements. Often applied to processes, equipment, or systems. | "Is the system/process ready and capable?" | Establish confidence that supporting processes (e.g., manufacturing, software) consistently produce correct results. |
| Risk Management (ISO 14971) | Systematic application of management policies, procedures, and practices to the tasks of analysis, evaluation, control, and monitoring of risk. | "Is the device safe enough?" | Identify hazards, estimate/evaluate associated risks, and control these risks to an acceptable level. |

Table 2: Typical Quantitative Outputs from Key Activities

| Activity | Example Quantitative Metrics | Acceptability Criteria (Example) |
|---|---|---|
| Design Verification | Software code coverage: 95%, Mechanical tensile strength: >50 N, Measurement accuracy: ±2% FS | Meets pre-defined design input specification limits. |
| Process Qualification (PQ) | Process Capability Index (Cpk): 1.33, Batch yield: 99.8%, Coating thickness uniformity: RSD <5% | Demonstrates statistical stability and capability over multiple runs. |
| Risk Evaluation | Risk Priority Number (RPN): Severity (1-5) x Occurrence (1-5) x Detection (1-5). Hazard Severity: Major. | Residual risk is acceptable per policy; RPN below pre-defined threshold after controls. |
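The process capability index listed for PQ is computed from the process mean, its standard deviation, and the specification limits: Cpk = min(USL − μ, μ − LSL) / (3σ). A minimal sketch follows; the thickness readings and the 9.0 to 11.0 µm specification window are hypothetical.

```python
import statistics

def cpk(values, lsl, usl):
    """Process capability index: distance from the mean to the nearer
    specification limit, in units of three sample standard deviations."""
    mu = statistics.mean(values)
    sigma = statistics.stdev(values)
    return min(usl - mu, mu - lsl) / (3.0 * sigma)

# Hypothetical coating thickness readings (micrometres) from PQ runs.
thickness_um = [10.0, 10.2, 9.8, 10.1, 9.9, 10.0, 10.1, 9.9, 10.0, 10.0]
capability = cpk(thickness_um, lsl=9.0, usl=11.0)
meets_target = capability >= 1.33
```

Cpk assumes the data are approximately normal and the process is stable; a control chart across the PQ runs should be checked before the index is quoted.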

The Scientist's Toolkit: Research Reagent Solutions for Validation Testing

Table 3: Essential Materials for Biocompatibility & Performance Validation

| Item / Reagent Solution | Function in Validation Testing |
|---|---|
| Cytotoxicity Assay Kit (e.g., MTT, XTT) | Quantifies cellular metabolic activity to assess the potential toxic effect of device extracts per ISO 10993-5. |
| Pyrogen Test Reagents (LAL/TAL) | Detects endotoxins from gram-negative bacteria as part of sterility and pyrogenicity validation per ISO 10993-11. |
| Simulated Use Fluids (e.g., PBS, Synthetic Blood) | Provides a standardized, consistent medium for in vitro performance testing under physiologically relevant conditions. |
| Wear & Fatigue Test Standards (e.g., UHMWPE rods, ISO 14242) | Standardized counterfaces and protocols for validating the durability of implantable bearing surfaces. |
| Reference Sensors & Calibration Standards | Traceable calibration tools (e.g., known weight, pressure, voltage) to validate the accuracy of device measurement systems. |

Technical Support Center: Troubleshooting Biomedical Device Experiments

This support center, framed within a thesis on Biomedical Engineering Device Testing and Validation Methods, provides targeted guidance for researchers and drug development professionals encountering experimental challenges during the device development lifecycle.

FAQs & Troubleshooting Guides

Q1: During in vitro cytotoxicity testing per ISO 10993-5, our polymeric device extract is causing high LDH release but low mitochondrial activity (MTT assay). What does this discrepancy indicate?

A: This pattern suggests a specific cytotoxic mechanism. High LDH indicates acute cell membrane damage and necrosis. Concurrently low MTT signal, which measures mitochondrial reductase activity, points to rapid metabolic shutdown or interference.

Troubleshooting Steps:

- Assay Interference: Test your device extract directly in the MTT assay without cells. Some materials reduce MTT tetrazolium salts directly, giving falsely high readings, while others adsorb or absorb the formazan product, giving falsely low readings.

- Time-Course Analysis: Perform LDH and MTT assays at multiple time points (e.g., 6, 24, 48h). The disparity may resolve if cells recover or if necrosis is a late event.

- Mechanistic Investigation: Supplement with a Caspase-3/7 activity assay to rule out early apoptosis, which would show elevated caspases with initially intact membranes (low LDH).

Q2: Our electrochemical biosensor shows signal drift and decreased sensitivity during accelerated shelf-life testing. What are the primary failure modes to investigate?

A: Signal drift in biosensors often relates to bioreceptor degradation or electrode fouling.

Systematic Investigation Protocol:

- Characterize Electrode Surface: Use Electrochemical Impedance Spectroscopy (EIS) to monitor changes in charge transfer resistance (Rct) over time. A rising Rct suggests passive layer formation.

- Test Bioreceptor Activity Independently: If possible, elute immobilized antibodies or enzymes and test activity in solution (e.g., via ELISA or kinetic colorimetric assay) to isolate the failure to the bioreceptor vs. the transducer.

- Environmental Factor Correlation: Correlate signal loss with temperature and humidity logs. Moisture ingress is a common culprit for polymer matrix swelling or delamination.

Q3: In a large-animal (porcine) hemodynamic study for a vascular graft, we observe anomalous pressure gradients not correlating with imaging data. How do we isolate the measurement error?

A: Discrepancy between direct pressure measurements and imaging (e.g., Doppler ultrasound) requires a calibration check of the entire data acquisition chain.

Experimental Verification Workflow:

- In-line Pressure Transducer Calibration: Before the next experiment, perform a static calibration against a mercury column or certified digital manometer at 37°C in saline.

- Simultaneous Measurement Audit: Place two identical, freshly calibrated transducers in series within the circuit. Divergent readings indicate a transducer-specific fault.

- Anatomical Validation: Post-mortem, perfuse the explanted graft at a known flow rate using a calibrated pump and measure pressure drop ex vivo to decouple measurement error from physiological responses (e.g., vasospasm).

Quantitative Data Summary

Table 1: Common *In Vitro* Test Failure Rates & Root Causes (Synthesized from Recent Studies)

| Test Type (Standard) | Typical Failure Rate Range | Most Frequent Root Cause (≥40% of cases) |
|---|---|---|
| Cytotoxicity (ISO 10993-5) | 10-15% | Leachable compounds (plasticizers, monomers, stabilizers) |
| Hemocompatibility (ISO 10993-4) | 15-25% | Surface roughness/topography leading to platelet adhesion |
| Accelerated Aging (ISO 11607) | 5-20% | Polymer oxidation or packaging seal integrity breach |

Table 2: Key Performance Indicators (KPIs) for Sensor Validation Phases

| Development Phase | Key Metric | Target Threshold (Example) | Measurement Protocol Reference |
|---|---|---|---|
| Proof-of-Concept | Limit of Detection (LoD) | ≤ 0.1 nM analyte in serum | CLSI EP17-A2 (10 replicates of blank) |
| Preclinical Verification | Intra-assay Precision (CV) | < 15% across working range | CLSI EP05-A3 (20 replicates, 3 levels) |
| Clinical Validation | Sensitivity/Specificity | > 95% vs. gold-standard assay | CLSI EP12-A2 (N ≥ 100 clinical samples) |
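The clinical validation KPI compares device calls against the gold-standard assay sample by sample. A minimal sketch of the sensitivity and specificity calculation is shown below; the 100-sample result set is hypothetical and the function name is illustrative.

```python
def sensitivity_specificity(device_positive, reference_positive):
    """Sensitivity = TP / (TP + FN); specificity = TN / (TN + FP),
    with the reference (gold-standard) assay taken as truth."""
    pairs = list(zip(device_positive, reference_positive))
    tp = sum(1 for d, ref in pairs if d and ref)
    fn = sum(1 for d, ref in pairs if not d and ref)
    tn = sum(1 for d, ref in pairs if not d and not ref)
    fp = sum(1 for d, ref in pairs if d and not ref)
    return tp / (tp + fn), tn / (tn + fp)

# Hypothetical study: 60 reference-positive and 40 reference-negative samples.
reference = [True] * 60 + [False] * 40
device = [True] * 58 + [False] * 2 + [False] * 39 + [True] * 1

sensitivity, specificity = sensitivity_specificity(device, reference)
meets_kpi = sensitivity > 0.95 and specificity > 0.95
```

Point estimates this close to the 95% target deserve a confidence interval; with 40 negatives, a single additional false positive would drop specificity to exactly 95%.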

Experimental Protocols

Protocol: Testing for Autoclave-Induced Material Degradation

Purpose: To validate that a polymer component maintains critical mechanical properties after repeated sterilization cycles.

Methodology:

- Sample Preparation: Prepare N≥30 test coupons per ASTM D638 (tensile) or D790 (flexural). Divide into 3 groups: Control (no sterilization), 1x cycle, 5x cycles.

- Sterilization: Steam sterilize using a validated moist-heat cycle (e.g., developed per ISO 17665). Typical cycle: 121°C, 15 psi, 30 minutes. Allow 24-hour recovery at 23±2°C, 50±5% RH.

- Mechanical Testing: Perform tensile/flexural testing on a calibrated universal testing machine. Record yield strength, ultimate tensile strength, and modulus of elasticity.

- Statistical Analysis: Perform one-way ANOVA with Tukey's post-hoc test (α=0.05). A significant decrease (>10%) in mean mechanical properties in the 5x cycle group indicates susceptibility.

Protocol: Surface Characterization for Hydrophilicity Change

Purpose: To quantify changes in surface wettability after plasma treatment, a common step for enhancing biocompatibility.

Methodology:

- Sample Handling: Use gloves and non-contact tools. Clean samples ultrasonically in isopropanol for 10 minutes.

- Measurement: Use a contact angle goniometer. Place a 3µL droplet of Type I water on the surface. Capture image at 0.5 seconds post-dispense.

- Data Collection: Measure left and right contact angles using Young-Laplace fitting. Take 5 measurements per sample, 3 samples per group.

- Timeline: Measure immediately after treatment, then at 1 hour, 24 hours, and 7 days post-treatment (stored in ambient air) to assess "hydrophobic recovery."


The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Biomedical Device Surface Modification & Testing

| Item Name | Function/Application | Key Consideration for Validation |

|---|---|---|

| Phosphate Buffered Saline (PBS), ISO Grade | Baseline extraction medium for leachable studies and assay diluent. | Must be certified endotoxin-free (<0.25 EU/mL) and have documented elemental impurities. |

| AlamarBlue / Resazurin Cell Viability Reagent | Fluorescent indicator of metabolic activity for real-time, non-destructive cytotoxicity monitoring. | Pre-test for direct interaction with device materials; establish linear range for your cell type. |

| Polydimethylsiloxane (PDMS) Silicone Elastomer Kit | For creating microfluidic models or soft tissue simulants in proof-of-concept devices. | Cure time and temperature affect mechanical properties; document process parameters rigorously. |

| Fibronectin, Human Plasma-Derived | Protein coating to promote cell adhesion on implant surfaces for in vitro biocompatibility assays. | Batch-to-batch variability can affect cell response; use the same source/batch for a study series. |

| Nucleic Acid Intercalating Dye (e.g., Propidium Iodide) | Membrane-impermeant stain to identify necrotic cells in flow cytometry or fluorescence microscopy. | Photosensitive and potentially mutagenic; requires careful handling and waste disposal protocols. |

Ethical Considerations in Pre-Clinical and Clinical Device Testing

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During in vivo biocompatibility testing, we observe an unexpected chronic inflammatory response beyond 12 weeks. What are the primary investigative steps?

A: This indicates a potential failure in the device's long-term biocompatibility. Follow this protocol:

- Histopathological Analysis: Perform a detailed histological assessment of the implant-tissue interface using H&E staining. Grade the response using the ISO 10993-6 scoring system for inflammation, neovascularization, fibrosis, and tissue necrosis.

- Material Degradation Analysis: Retrieve the device and analyze for:

- Unexpected Degradation: Use SEM/EDS to examine surface pitting, cracking, or corrosion.

- Leachable Profile Re-testing: Conduct GC-MS or HPLC on explanted device extracts to identify any new breakdown products not present in the initial ISO 10993-18 chemical characterization.

- Control Review: Re-examine negative control sites to rule out systemic or procedural causes.

Q2: Our hemodynamic sensor shows significant signal drift during chronic animal implant studies. How do we isolate the cause?

A: Signal drift in chronic implants can stem from biofouling or from electronic instability in the sensor itself. Execute this isolation workflow:

Experimental Protocol: In Vitro Drift Simulation

- Objective: Reproduce drift in a controlled bioreactor to decouple biological from electronic factors.

- Method:

- Place duplicate sensors in two parallel flow circuits.

- Circuit A (Test): Perfuse with simulated body fluid (SBF) at 37°C.

- Circuit B (Control): Perfuse with inert saline at 37°C.

- Apply identical, cyclically varying pressure waveforms to both circuits for 28 days.

- Record baseline, 7-day, 14-day, and 28-day calibration metrics (zero offset, sensitivity, linearity).

- Interpretation: Drift in Circuit A only suggests biofouling. Drift in both circuits indicates inherent sensor instability.
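The interpretation rule can be written as a small decision function. This is a sketch under stated assumptions: `classify_drift` and its `threshold` argument are hypothetical, the drift values are whatever calibration metric is being tracked (for example zero offset change over 28 days), and the two outcomes beyond those named in the protocol (control-only drift, no drift) are added here only to make the function total.

```python
def classify_drift(drift_test, drift_control, threshold):
    """Classify drift from the two-circuit bioreactor experiment.

    drift_test    -- drift measured in Circuit A (simulated body fluid)
    drift_control -- drift measured in Circuit B (inert saline)
    threshold     -- smallest drift magnitude treated as real (assumed value)
    """
    test_drifts = abs(drift_test) > threshold
    control_drifts = abs(drift_control) > threshold
    if test_drifts and control_drifts:
        return "inherent sensor instability"
    if test_drifts:
        return "biofouling suspected"
    if control_drifts:
        return "inconclusive: control-only drift, check the test rig"
    return "no significant drift"

# Hypothetical zero-offset drift in mmHg over 28 days
print(classify_drift(drift_test=4.2, drift_control=0.3, threshold=1.0))
```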

Q3: We are preparing an IDE application. What are the most common ethical deficiencies flagged by IRBs in early feasibility study protocols?

A: Based on recent FDA feedback summaries, common deficiencies involve subject protection and data validity:

| Deficiency Category | Specific Issue | Recommended Correction |

|---|---|---|

| Risk-Benefit Analysis | Overstatement of potential direct benefit to subjects in early-stage, high-risk studies. | Clearly state the study is for device development; any benefit is speculative. Justify risks by the importance of the knowledge gained. |

| Subject Selection | Inclusion criteria are too broad, potentially exposing lower-risk patients to disproportionate risk. | Tighten criteria to enroll only those with severe disease refractory to all standard options. |

| Monitoring & Stopping Rules | Lack of explicit, data-driven criteria for pausing enrollment. | Define objective performance criteria (e.g., SAE rate > X%) that trigger immediate review by the Data Monitoring Committee. |

Q4: How do we ethically justify the sample size for a first-in-human pilot study when no clinical data exists?

A: Justification must be based on technical, not statistical, goals. Provide a clear "learning objective" rationale.

- Typical Sample Size Range: 10-15 subjects for initial pilot/feasibility studies.

- Ethical Justification Framework:

- The sample is the minimum necessary to assess initial device function and safety parameters (e.g., ability to take measurements, acute procedural safety).

- Explicitly state the study is not powered for statistical hypothesis testing.

- Pre-specify the key safety and performance observations (e.g., "successful deployment in 90% of attempts") that will inform the design of the subsequent pivotal study.

Experimental Protocols

Protocol: ISO 10993-5 In Vitro Cytotoxicity Testing (MTT Assay)

This is a critical pre-screening test to ethically reduce animal use.

- Extract Preparation: Incubate device material (or a representative sample) in cell culture medium (e.g., MEM with serum) at a surface area-to-volume ratio of 3 cm²/mL for 24±2 hrs at 37°C.

- Cell Culture: Seed L-929 fibroblast cells in a 96-well plate at a density of 1 x 10⁴ cells/well. Incubate for 24 hrs to form a sub-confluent monolayer.

- Exposure: Replace medium in test wells with 100µL of device extract. Include:

- Negative Control: Culture medium only.

- Positive Control: 2% Phenol solution.

- Incubation: Incubate cells with extract for 48±2 hrs.

- Viability Assessment: Add 10µL of MTT reagent to each well. Incubate for 4 hrs. Remove medium and add 100µL of solubilization solution. Shake gently.

- Analysis: Measure absorbance at 570 nm (reference 650 nm). Calculate cell viability:

Viability (%) = (Absorbance of Test / Absorbance of Negative Control) x 100

- Acceptance Criterion: Viability ≥ 70% is required per ISO 10993-5.
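As a worked illustration of the viability calculation and the 70% criterion, the sketch below averages replicate absorbances before taking the ratio. The function names and the absorbance readings are hypothetical; background subtraction at the reference wavelength is assumed to have been done already.

```python
from statistics import mean

def viability_percent(abs_test, abs_negative_control):
    """Viability (%) = test absorbance / negative-control absorbance x 100."""
    return 100.0 * abs_test / abs_negative_control

def passes_iso_10993_5(viability, threshold=70.0):
    """Apply the >= 70% viability acceptance criterion."""
    return viability >= threshold

# Hypothetical background-corrected absorbances at 570 nm
test_wells = [0.44, 0.46, 0.45]
negative_control_wells = [0.61, 0.59, 0.60]

v = viability_percent(mean(test_wells), mean(negative_control_wells))
print(f"Viability = {v:.1f}% -> {'pass' if passes_iso_10993_5(v) else 'fail'}")
```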

Protocol: GLP-compliant In Vivo Safety and Performance Study (Chronic Implant)

A template for large animal studies.

- Objective: Evaluate the 90-day functional safety and performance of an implantable glucose sensor.

- Animal Model: N=20 purpose-bred Yorkshire swine, split into Test (n=15) and Sham Control (n=5) groups.

- Pre-op: Acclimatize animals for 14 days. Perform baseline clinical pathology (hematology, clinical chemistry).

- Surgery (Test Group): Under general anesthesia and aseptic technique, implant the sensor in the jugular vein, tunneling the transmitter to a subcutaneous pocket on the back.

- Surgery (Sham Control): Perform identical surgical dissection and vessel exposure without device implantation.

- Post-op Monitoring: BID observations for 7 days, then daily. Assess incision sites, body weight, food consumption, and behavior.

- Terminal Procedure (Day 91): Euthanize via barbiturate overdose. Perform:

- Gross Necropsy: Document implant site and all major organs.

- Histopathology: Collect and preserve tissue at implant site and distal organs (heart, lung, liver, kidney, spleen). Process for H&E and special stains (e.g., Masson's Trichrome for fibrosis).

- Device Retrieval: Explant device and analyze for biofouling and structural integrity.

Visualizations

Title: Chronic Inflammation Troubleshooting Workflow

Title: Ethical & Regulatory Testing Pathways for Medical Devices

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Device Testing |

|---|---|

| Simulated Body Fluid (SBF) | An in vitro solution with ion concentrations similar to human blood plasma. Used to assess bioactivity and degradation of implant materials. |

| L-929 Mouse Fibroblast Cell Line | The standard cell line specified in ISO 10993-5 for cytotoxicity testing of medical devices and materials. |

| MTT Reagent (3-(4,5-Dimethylthiazol-2-yl)-2,5-Diphenyltetrazolium Bromide) | A yellow tetrazole reduced to purple formazan in metabolically active cells. Used to quantify cell viability in cytotoxicity assays. |

| Formalin-Fixed Paraffin-Embedded (FFPE) Tissue Blocks | The standard method for preserving and preparing explanted tissue with the implant interface for sectioning, staining, and histopathological analysis. |

| Good Laboratory Practice (GLP) Compliance Kits | Audited reagent sets (e.g., for clinical pathology, hematology) with full traceability, required for animal studies intended for regulatory submission. |

| Programmable Bioreactor System | Enables in vitro durability, fatigue, and drift testing of devices under simulated physiological conditions (pressure, flow, temperature). |

From Bench to Bedside: A Practical Guide to Core Testing Methodologies

Technical Support Center: Troubleshooting & FAQs

Mechanical Characterization

Q1: During uniaxial tensile testing of a polymer stent, the stress-strain curve shows unexpected yielding and a lower Young's modulus than literature values. What could be the cause? A: This is often due to improper sample mounting, grip slippage, or an incorrect strain rate. Ensure the sample is aligned axially within the grips to avoid bending moments. Use sandpaper or specialized pneumatic grips to prevent slippage. For polymers, the strain rate must be standardized (e.g., 1 mm/min per ASTM D638); a faster rate can overestimate modulus. Also, precondition the sample with 3-5 loading cycles to 2% strain to minimize the Mullins effect.

Q2: How do I address inconsistent results in cyclic fatigue testing of a nitinol heart valve frame? A: Inconsistency typically stems from inadequate control of the testing environment or improper calibration of mean strain. Ensure the test is conducted in a temperature-controlled saline bath (37±0.5°C) to simulate physiologic conditions and manage self-heating of the metal. Use an extensometer, not crosshead displacement, to set and monitor the mean and alternating strain accurately. Implement periodic calibration of the load cell at the low forces typical for fatigue cycles.

Electrical Characterization

Q3: My electrochemical impedance spectroscopy (EIS) data for a biosensor coating shows a depressed, non-ideal semicircle. Is my coating defective? A: Not necessarily. A depressed semicircle often indicates constant phase element (CPE) behavior, which is common in non-homogeneous, rough, or porous surfaces—typical of many biocompatible coatings. Analyze the data using a modified Randles circuit with a CPE instead of a pure capacitor. Ensure your reference electrode is stable and the electrolyte (e.g., PBS) is fresh and de-aerated to minimize artifacts.
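To see why a CPE produces a depressed semicircle, the modified Randles circuit can be evaluated directly. The sketch below assumes the common form Z = R_s + 1 / (1/R_ct + Q(jω)^α), where α = 1 recovers an ideal capacitor; the function name and all parameter values are illustrative assumptions, not fitted data.

```python
import math

def cpe_randles_impedance(freq_hz, r_s, r_ct, q, alpha):
    """Impedance of R_s in series with (R_ct parallel to a CPE).

    q     -- CPE magnitude (S*s^alpha); equals capacitance when alpha == 1
    alpha -- CPE exponent, 0 < alpha <= 1 (lower = more depressed arc)
    """
    omega = 2.0 * math.pi * freq_hz
    y_cpe = q * (1j * omega) ** alpha  # CPE admittance
    return r_s + 1.0 / (1.0 / r_ct + y_cpe)

# Illustrative values: 100 ohm solution resistance, 10 kohm charge transfer
r_s, r_ct, q = 100.0, 1.0e4, 1.0e-6
freqs = [10 ** (k / 10.0) for k in range(-20, 61)]  # 0.01 Hz to 1 MHz

for alpha in (1.0, 0.8):
    peak = max(-cpe_randles_impedance(f, r_s, r_ct, q, alpha).imag for f in freqs)
    print(f"alpha = {alpha}: peak -Im(Z) = {peak:.0f} ohm")
```

With α = 1 the arc peaks near R_ct / 2; lowering α flattens the arc without changing its intercepts, which is the signature described above.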

Q4: When measuring the conductivity of a hydrogel, the values drift downward over time. What is the troubleshooting step? A: This is likely due to electrode polarization or drying of the hydrogel. Use a 4-point probe (Kelvin) method to eliminate contact resistance effects. For long-term measurements, ensure the sample chamber is sealed with a vapor barrier (e.g., parafilm) and maintained at 100% humidity. Apply a protective dielectric coating like silicone grease to the exposed edges to prevent ionic leakage and evaporation.

Material & Surface Characterization

Q5: Atomic Force Microscopy (AFM) scans of a drug-eluting coating reveal artifacts that look like "doubled" features. A: Doubled features are most often a tip artifact (a damaged or contaminated "double" tip), although scanner calibration and feedback loop settings can contribute. First, inspect the tip and replace it if it is damaged or contaminated, and check for loose components or contaminants on the sample. Calibrate the AFM scanner using a standard grating (e.g., 1 μm pitch). Reduce the scan rate (e.g., to 0.5-1 Hz) to allow the feedback loop to track the surface properly. For soft materials, ensure you are using a non-contact or tapping mode with an appropriate soft cantilever (low spring constant, e.g., 0.1-5 N/m).

Q6: Contact angle measurements for wettability assessment are highly variable on the same substrate. A: Variability arises from surface contamination, inconsistent droplet volume, or ambient conditions. Always clean the substrate rigorously (UV-ozone, plasma treatment) immediately before testing. Use an automated dispensing system with a fixed syringe size to ensure consistent droplet volume (typically 2-5 µL). Perform measurements in a closed environmental chamber to control temperature and humidity, and record the measurement within 3 seconds of droplet deposition.

Key Experimental Protocols

Protocol 1: Biaxial Fatigue Testing of a Synthetic Vascular Graft

Objective: To simulate physiologic pulsatile loading and assess material durability. Method:

- Sample Preparation: Cut graft material into 25 mm x 25 mm squares. Mount onto a biaxial testing system with suture loops or specialized biocompatible clamps at each edge.

- Environmental Control: Submerge in phosphate-buffered saline (PBS) at 37°C.

- Loading Profile:

- Apply cyclic circumferential strain (representing arterial diametral change) with a sine wave, 1 Hz frequency, 5-10% strain amplitude.

- Simultaneously apply a static axial pre-strain of 5% (mimicking in-vivo tethering).

- Data Collection: Record force in both axes for 10 million cycles or until failure. Monitor for permanent set, thinning, or cracking via periodic optical microscopy.

- Analysis: Plot S-N (stress-cycle) curve. Use scanning electron microscopy (SEM) for post-hoc fracture analysis.

Protocol 2: Electrochemical Characterization of a Neural Electrode Coating

Objective: To evaluate charge storage capacity (CSC) and charge injection limit (CIL). Method:

- Setup: Use a standard 3-electrode cell in 0.9% NaCl. The working electrode is the coated device, a Pt mesh is the counter electrode, and an Ag/AgCl (sat. KCl) is the reference.

- Cyclic Voltammetry (CV): Sweep potential between water window limits (-0.6V to +0.8V vs. Ag/AgCl) at a scan rate of 50 mV/s. Repeat until curves stabilize (typically 20 cycles).

- Calculation: CSC (mC/cm²) is calculated by integrating the cathodic or anodic current over time and dividing by the geometric area; equivalently, integrate the current over potential and divide by the scan rate and the geometric area.

- Voltage Transient (VT) Testing: Use a biphasic, current-controlled pulse (0.2 ms phase, 1 mA amplitude). Measure the resulting interphase voltage.

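- Analysis: CIL is the largest charge per phase (pulse current × phase duration, normalized to geometric area) for which the electrode polarization, i.e., the measured voltage excursion minus the access voltage (Va), stays within the water window. This protocol is critical for validating safe stimulation parameters.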
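The CSC calculation from the CV step can be sketched as a trapezoidal integration. Integrating current over potential and dividing by the scan rate is the same as integrating current over time. The function name and the flat-current test sweep below are hypothetical, and only the cathodic (negative) current is counted.

```python
def cathodic_csc(potentials_v, currents_a, scan_rate_v_per_s, area_cm2):
    """Cathodic charge storage capacity in mC/cm^2 from one CV sweep.

    Uses trapezoidal integration of the negative current over potential,
    divided by the scan rate to convert potential steps into time steps.
    """
    charge_c = 0.0
    for k in range(1, len(potentials_v)):
        d_e = abs(potentials_v[k] - potentials_v[k - 1])
        i0 = min(currents_a[k - 1], 0.0)  # keep cathodic current only
        i1 = min(currents_a[k], 0.0)
        charge_c += 0.5 * abs(i0 + i1) * d_e / scan_rate_v_per_s
    return 1000.0 * charge_c / area_cm2  # C -> mC, per geometric area

# Hypothetical sweep: +0.8 V down to -0.6 V at 50 mV/s with a flat -1 mA current
potentials = [0.8 - 0.1 * k for k in range(15)]
currents = [-1.0e-3] * len(potentials)
csc = cathodic_csc(potentials, currents, scan_rate_v_per_s=0.05, area_cm2=1.0)
print(f"CSC = {csc:.1f} mC/cm^2")
```

In this synthetic case the sweep lasts 1.4 V / 0.05 V/s = 28 s, so 1 mA delivers 28 mC over 1 cm².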

Summarized Quantitative Data

Table 1: Representative Mechanical Properties of Biomaterials

| Material | Application | Young's Modulus (GPa) | Ultimate Tensile Strength (MPa) | Strain at Failure (%) | Test Standard |

|---|---|---|---|---|---|

| Medical Grade PEEK | Spinal Implant | 3.6 - 4.2 | 90 - 100 | 20 - 30 | ASTM D638 |

| 316L Stainless Steel | Stent | 190 - 210 | 540 - 620 | 40 - 50 | ASTM E8/E8M |

| Nitinol (Superelastic) | Guidewire | 50 - 60 (Austenite) | 900 - 1200 | 10 - 15 | ASTM F2516 |

| Collagen Hydrogel | Tissue Scaffold | 0.0005 - 0.005 | 0.01 - 0.05 | 50 - 200 | N/A (Custom) |

Table 2: Typical Electrochemical Performance Metrics for Coatings

| Coating Type | Charge Storage Capacity (CSC) mC/cm² | Electrochemical Impedance at 1kHz (kΩ) | Charge Injection Limit (CIL) mC/cm² | Key Benefit |

|---|---|---|---|---|

| Sputtered Iridium Oxide (SIROF) | 40 - 80 | 1 - 5 | 1.5 - 3.5 | High CSC, Excellent Stability |

| PEDOT:PSS (Conductive Polymer) | 100 - 200 | 0.5 - 2 | 0.8 - 1.5 | Very Low Impedance, Soft |

| Titanium Nitride (TiN) | 10 - 30 | 5 - 15 | 0.3 - 0.8 | Extreme Durability |

| Activated Iridium (AIROF) | 20 - 40 | 2 - 10 | 1.0 - 2.0 | High Catalytic Activity |

Diagrams

Title: Mechanical Fatigue Testing Workflow

Title: EIS Data Troubleshooting Decision Tree

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for In-Vitro Characterization

| Item | Function/Application | Key Consideration |

|---|---|---|

| Phosphate Buffered Saline (PBS), pH 7.4 | Standard electrolyte for simulating physiologic ionic environment in electrochemical and corrosion tests. | Use calcium/magnesium-free for long-term immersion to prevent precipitate formation. |

| Polydimethylsiloxane (PDMS) Sylgard 184 | For creating microfluidic chambers, soft actuator membranes, or mounting samples. | Thorough degassing before curing and precise 10:1 base:curing agent ratio for consistent elasticity. |

| Fibronectin or Collagen Type I Solution | Protein coating for cell culture studies on test substrates to ensure cell adhesion. | Aliquot to avoid freeze-thaw cycles; coat surfaces under sterile conditions for biocompatibility tests. |

| Conductive Silver Epoxy | Attaching leads to small or irregularly shaped samples for electrical measurements. | Requires low-temperature cure (60-80°C) to avoid damaging temperature-sensitive polymers/biomaterials. |

| Nanoindenter Calibration Standard (Fused Silica) | For calibrating hardness and modulus measurement systems (AFM, nanoindenter). | Must be cleaned with acetone and ethanol before each use to maintain a pristine, known surface. |

| Potentiostat/Galvanostat Electrolyte (e.g., 0.9% NaCl or Simulated Body Fluid) | For all electrochemical testing (EIS, CV, corrosion). | Must be freshly prepared and deaerated with nitrogen for 15 mins prior to corrosion potential tests. |

In-Silico Modeling and Simulation (FEA, CFD) for Predictive Performance Analysis

Technical Support Center: Troubleshooting Guides & FAQs

Q1: In our FEA of a coronary stent, the mesh refinement study is not converging. Von Mises stress values keep increasing with a finer mesh. What is the issue and how do we resolve it? A1: This typically indicates a stress singularity, often caused by a geometric feature like a sharp re-entrant corner or a point load in the model. In biomedical devices like stents, these are non-physical artifacts of the idealized CAD geometry.

- Protocol for Resolution:

- Identify: Locate the node(s) with ever-increasing stress. This is your singularity.

- Modify Geometry: Apply a small, realistic fillet (e.g., 0.01 mm) to sharp internal corners in your CAD software. Even a microscopic fillet removes the singularity.

- Re-mesh: Regenerate the mesh, ensuring element quality metrics (Jacobian, Skewness) are within acceptable limits.

- Re-run Study: Perform a new mesh convergence study. Stresses should now converge to a stable value.

- Key Check: Ensure your material model (e.g., Nitinol superelasticity) and boundary conditions (realistic vessel contact) are correctly applied, as these can also lead to erroneous results.
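The convergence check in this protocol can be automated. The sketch below reports the relative change in peak stress between successive mesh refinements and flags the pattern described above, where stress keeps rising and the change never shrinks. The function name, the 2% tolerance, and the stress values are illustrative assumptions.

```python
def mesh_convergence(peak_stresses, tol=0.02):
    """Summarize a mesh refinement study (coarsest mesh first).

    peak_stresses -- peak von Mises stress for each refinement level
    tol           -- relative change treated as converged (assumed 2%)
    """
    pairs = list(zip(peak_stresses, peak_stresses[1:]))
    changes = [abs(b - a) / abs(a) for a, b in pairs]
    converged = len(changes) > 0 and changes[-1] < tol
    always_rising = len(pairs) >= 2 and all(b > a for a, b in pairs)
    # A singularity shows as monotonically rising stress whose step-to-step
    # change does not decay as the mesh is refined.
    suspect_singularity = (
        always_rising and not converged and changes[-1] >= changes[0]
    )
    return {
        "changes": changes,
        "converged": converged,
        "suspect_singularity": suspect_singularity,
    }

# Hypothetical peak stresses in MPa before and after adding a fillet
print(mesh_convergence([400.0, 520.0, 690.0, 930.0]))
print(mesh_convergence([400.0, 430.0, 438.0, 440.0]))
```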

Q2: Our CFD simulation of blood flow through a ventricular assist device (VAD) predicts unrealistically high hemolysis indices. How do we validate and improve the model? A2: High predicted hemolysis often stems from inaccuracies in turbulence modeling or mesh resolution in high-shear regions.

- Validation & Improvement Protocol:

- Turbulence Model Selection: For transitional flows in VADs, use a Scale-Resolving Simulation (SRS) model like SAS (Scale-Adaptive Simulation) or a well-validated RANS model (e.g., k-ω SST) with curvature correction.

- Near-Wall Resolution: Ensure your mesh meets the requirements for your chosen turbulence model (e.g., y+ ≈ 1 for wall-resolved LES/SAS).

- Hemolysis Model Calibration: Use the power-law model (e.g., Giersiepen model) and calibrate constants (α, β, C) against published in-vitro data for similar devices.

- Quantitative Validation: Compare your simulation's Pressure Head (mmHg) vs. Flow Rate (L/min) and Hydraulic Efficiency (%) curves against experimental bench data.

Table 1: Quantitative Validation Metrics for a CFD VAD Model

| Performance Metric | Simulation Result | Experimental Benchmark | Acceptable Error |

|---|---|---|---|

| Pressure Head @ 5 L/min | 102 mmHg | 105 mmHg | ±5% |

| Hydraulic Efficiency @ Peak | 68% | 65% | ±5% |

| Normalized Hemolysis Index | 0.08 g/100L | 0.10 g/100L | ±0.03 g/100L |
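The calibration and validation steps can be sketched together. The power-law form used here, HI = C · τ^α · t^β with shear stress τ and exposure time t, is one common way of writing the model; which exponent is attached to which variable is an assumption and should be matched to the reference the constants are taken from. The function names are hypothetical, and the efficiency tolerance is treated as a relative error.

```python
def power_law_hemolysis(shear_pa, exposure_s, c, alpha, beta):
    """Power-law hemolysis index: HI = C * tau^alpha * t^beta."""
    return c * shear_pa ** alpha * exposure_s ** beta

def within_tolerance(simulated, experimental, rel_tol=None, abs_tol=None):
    """True if the simulation error is inside the stated tolerance.

    Pass abs_tol for an absolute band (same units as the metric),
    otherwise rel_tol as a fraction of the experimental value.
    """
    error = simulated - experimental
    if abs_tol is not None:
        return abs(error) <= abs_tol
    return abs(error) / abs(experimental) <= rel_tol

# Rows of Table 1: (metric, simulated, experimental, tolerance kwargs)
checks = [
    ("Pressure head @ 5 L/min (mmHg)", 102.0, 105.0, {"rel_tol": 0.05}),
    ("Hydraulic efficiency @ peak (%)", 68.0, 65.0, {"rel_tol": 0.05}),
    ("Normalized hemolysis index (g/100L)", 0.08, 0.10, {"abs_tol": 0.03}),
]
for name, sim, exp, tol in checks:
    print(f"{name}: {'OK' if within_tolerance(sim, exp, **tol) else 'OUT OF RANGE'}")
```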

Q3: When simulating drug release from a biodegradable polymer scaffold using CFD, how do we correctly model the coupled phenomena of fluid flow, diffusion, and surface erosion? A3: This requires a multiphysics approach. The core issue is ensuring the mass transfer boundaries are correctly linked.

- Multiphysics Coupling Protocol:

- Domains: Define two domains: the flowing fluid (blood/plasma) and the solid polymer scaffold.

- Physics Interface: Use a "Transport of Diluted Species" interface for drug diffusion in both domains, with different diffusion coefficients (D_fluid, D_polymer).

- Boundary Condition: At the fluid-solid interface, apply a "flux" condition that accounts for convective mass transfer (from fluid flow) and the erosion-driven source term.

- Erosion Kinetics: Implement a surface reaction or a moving mesh boundary where the erosion rate (dm/dt, mm/s) is defined by a kinetic law (e.g., rate = k * C_H2O^n for hydrolysis).

- Solve: Use a time-dependent study with a direct or segregated solver, monitoring mass conservation.

Workflow for Coupled Drug Release Simulation

Q4: What are common pitfalls in setting up FEA contact between a prosthetic heart valve and native tissue, and how can we ensure numerical stability? A4: The main pitfalls are unrealistic penetration and chattering due to sudden changes in contact status.

- Stable Contact Methodology:

- Contact Formulation: Use an "Augmented Lagrange" or "Normal Lagrange" formulation instead of "Pure Penalty" for better control over penetration.

- Normal Stiffness: Start with an automatically calculated stiffness factor, then increase it incrementally until penetration is minimal (<1% of element size) without causing divergence.

- Surface Treatment: For tissue, define the valve surface as the "contact" and the tissue as the "target." Apply a "flexible-flexible" contact if both bodies are deformable.

- Stabilization: Enable "automatic bisectioning" in the solver and apply a small damping factor (e.g., 0.1% of stiffness) to absorb high-frequency oscillations.

The Scientist's Toolkit: Research Reagent Solutions for In-Silico Validation

Table 2: Essential Digital Materials & Software Tools

| Tool/Reagent | Function in Biomedical Device Testing | Example Vendor/Platform |

|---|---|---|

| Ansys LS-DYNA | Explicit FEA for high-strain rate events (e.g., stent deployment, impact). | Ansys Inc. |

| Simulia Abaqus | Advanced nonlinear FEA for soft tissue and polymer material models. | Dassault Systèmes |

| Ansys Fluent / CFX | High-fidelity CFD for hemodynamics, shear stress, and mass transfer. | Ansys Inc. |

| COMSOL Multiphysics | Integrated platform for coupled phenomena (fluid-structure interaction, electrochemistry). | COMSOL Group |

| OpenFOAM | Open-source CFD for customizable solvers (e.g., non-Newtonian blood models). | The OpenFOAM Foundation |

| Materialise Mimics | Converts medical imaging (CT/MRI) to 3D CAD for patient-specific modeling. | Materialise NV |

| ISO 5840-3:2021 | Digital standard providing framework for in-silico validation of cardiovascular devices. | International Organization for Standardization |

| ASME V&V 40 | Risk-informed framework for assessing credibility of computational models. | The American Society of Mechanical Engineers |

Model Credibility Assessment Workflow

Technical Support Center

Welcome to the technical support center for in-vivo testing within biomedical engineering device validation. This guide addresses common experimental challenges, providing troubleshooting and detailed protocols to ensure robust and ethically compliant research.

FAQs & Troubleshooting Guides

Q1: Our surgically implanted biosensor in a murine model shows rapid signal degradation (within 24h) post-procedure. What are the potential causes? A: This is often a foreign body response (FBR) or surgical complication.

- Troubleshooting Steps:

- Check Sterility: Review aseptic technique logs. Perform microbial culture on explanted device. Signal loss with concurrent animal morbidity suggests infection.

- Histopathology: Sacrifice animal, explant device with surrounding tissue, and perform H&E staining. A dense neutrophil infiltrate indicates infection; a developing fibrotic capsule (macrophages/fibroblasts) indicates FBR.

- Device Function Test: In vitro test in physiological solution post-explant to rule out primary device failure.

- Surgical Review: Ensure minimal tissue trauma, appropriate biocompatible sutures, and no excessive tension around the implant site.

- Preventive Protocol: Apply a sustained-release anti-inflammatory drug coating (e.g., dexamethasone) to the device. Optimize surgical skill via practice on cadavers.

Q2: During a catheterization protocol in a porcine model, we encounter persistent vascular spasm, obstructing device delivery. How can we mitigate this? A: Vascular spasm is common in large animal models due to vessel manipulation.

- Immediate Action: Topical application of warm saline and 2% lidocaine or papaverine to the exposed vessel. Allow 2-3 minutes for relaxation.

- Systemic Pre-Medication: Consider pre-operative administration of a calcium channel blocker (e.g., verapamil, 0.1 mg/kg IV) if physiologically permissible for the study.

- Technical Adjustment: Ensure all guidewires and catheters are flushed with heparinized saline. Use gentle, minimal traction on vessel loops. Increase magnification for more precise vessel handling.

Q3: Our IACUC protocol was returned with stipulations regarding endpoint criteria for a heart failure device study. How do we define robust, objective humane endpoints? A: Ethical oversight requires clear, measurable endpoints beyond mortality.

- Solution: Implement a scoring sheet with quantitative thresholds. The protocol must define the specific score or combination of findings that trigger immediate intervention or euthanasia.

Table 1: Example Humane Endpoint Scoring Sheet for Rodent Heart Failure Study

| Clinical Parameter | Score 0 (Normal) | Score 1 (Mild) | Score 2 (Moderate) | Score 3 (Severe) |

|---|---|---|---|---|

| Body Weight Loss | <5% | 5-10% | 10-15% | >15% |

| Activity/Responsiveness | Normal | Mildly lethargic | Reluctant to move | Unresponsive to stimulus |

| Coat Condition | Normal | Mild piloerection | Severe piloerection, ungroomed | - |

| Respiratory Effort | Normal | Mild dyspnea | Obvious labored breathing | Severe dyspnea, cyanosis |

| Food/Water Intake | Normal | Reduced (<50% normal) | Minimal intake | No intake |

Pre-defined Intervention Trigger: A total score ≥ 8, OR a score of 3 in any single parameter, mandates euthanasia.
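The trigger rule from the scoring sheet is simple enough to encode directly, which helps keep daily assessments consistent across observers. The function name and the parameter keys below are hypothetical; the thresholds are the ones stated in the example table.

```python
def euthanasia_triggered(scores, total_threshold=8, single_threshold=3):
    """Apply the humane endpoint rule to one animal's daily scores.

    scores -- mapping of clinical parameter name to its 0-3 score
    Returns True if the total reaches the threshold OR any single
    parameter reaches the severe score.
    """
    total = sum(scores.values())
    any_severe = any(s >= single_threshold for s in scores.values())
    return total >= total_threshold or any_severe

# Hypothetical daily assessment
today = {
    "body_weight_loss": 2,
    "activity": 2,
    "coat_condition": 1,
    "respiratory_effort": 2,
    "food_water_intake": 1,
}
print(sum(today.values()), euthanasia_triggered(today))
```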

Q4: We observe high inter-animal variability in pharmacokinetic data for a drug-eluting stent tested in a rabbit iliac model. How can we improve consistency? A: Variability often stems from surgical technique, animal physiology, and post-op management.

- Standardization Protocol:

- Pre-Op: Acclimate animals for 7 days. Standardize diet, weight range (e.g., 3.0-3.5 kg), and pre-anesthetic fasting period.

- Anesthesia: Use precisely calculated, weight-based doses delivered via constant-rate infusion pump, not bolus injections. Monitor vital signs (temp, SpO2, ECG) throughout.

- Surgery: A single, highly trained surgeon should perform all procedures. Standardize vessel segment, degree of injury induced (e.g., balloon inflation pressure and duration), and stent deployment pressure.

- Post-Op: Administer analgesics (buprenorphine SR) and antibiotics (enrofloxacin) on a fixed schedule to all animals. Use controlled housing.

Experimental Protocol: Murine Model of Hindlimb Ischemia for Angiogenesis Therapeutic Device Validation

Objective: To surgically induce unilateral hindlimb ischemia in a mouse, enabling the evaluation of a pro-angiogenic device or drug delivery system.

Materials: See "The Scientist's Toolkit" below.

Detailed Methodology:

- Anesthesia & Preparation: Induce anesthesia with 3% isoflurane in oxygen, maintain at 1.5-2%. Apply ophthalmic ointment. Depilate the left hindlimb and abdomen. Position mouse supine on a heating pad. Cleanse surgical site with alternating betadine and 70% ethanol scrubs (3x each).

- Surgical Exposure: Using sterile micro-dissection tools, make a 1cm skin incision over the proximal medial thigh. Gently separate the gracilis muscles to expose the femoral neurovascular bundle.

- Vessel Dissection: Under high magnification (stereomicroscope), carefully separate the femoral artery and vein from the accompanying nerve using fine forceps.

- Ligation & Excision: Ligate the femoral artery proximal to the superficial epigastric branch and distal to the bifurcation of the saphenous and popliteal arteries using 7-0 silk sutures. Transect and excise the entire segment of artery between the ligatures. Ensure the vein and nerve remain intact.

- Closure: Irrigate the area with sterile saline. Approximate the muscle layer with a single 6-0 absorbable suture. Close the skin with tissue adhesive or wound clips.

- Post-Operative Care: Administer sustained-release buprenorphine (1.0 mg/kg SQ) pre-emptively. Place mouse in a clean, warm cage with hydrogel diet supplement. Monitor daily for signs of pain, distress, or infection for 7 days.

- Perfusion Assessment: At predetermined endpoints (e.g., days 0, 7, 14), quantify limb perfusion using Laser Doppler Perfusion Imaging (LDPI). Calculate the ischemic/normal limb perfusion ratio.

Visualizations

Diagram Title: In-Vivo Testing Workflow with Ethical Oversight

Diagram Title: Foreign Body Response to Implanted Device

The Scientist's Toolkit: Key Reagent Solutions for In-Vivo Testing

Table 2: Essential Materials for Surgical Ischemia Model

| Item | Function & Rationale |

|---|---|

| Isoflurane Vaporizer | Precisely delivers inhalant anesthetic for safe, controllable surgical-plane anesthesia with rapid recovery. |

| Sterile Micro-Dissection Kit | Fine forceps, scissors, and needle holders for delicate vascular dissection, minimizing tissue trauma. |

| 7-0 or 8-0 Non-Absorbable Suture (e.g., Silk) | Provides secure, non-slipping ligation of small-diameter vessels like the murine femoral artery. |

| Laser Doppler Perfusion Imager (LDPI) | Quantifies real-time blood flow non-invasively, enabling longitudinal assessment of ischemic severity and recovery. |

| Sustained-Release Buprenorphine | Provides 72h of analgesia post-op, ensuring animal welfare without need for stressful daily injections. |

| Antibiotic Ointment (e.g., Bacitracin) | Applied topically at incision site to provide a barrier against common skin pathogens. |

| Heated Surgical Platform | Maintains core body temperature during anesthesia, preventing hypothermia-induced complications. |

| Sterile Saline Irrigation | Used to keep exposed tissues moist during surgery, preventing desiccation and cell death. |

Accelerated Life Testing (ALT) and Shelf-Life Studies for Durability Assessment

Technical Support Center: Troubleshooting & FAQs

Q1: During an ALT for a polymer-based drug delivery implant, we observe premature failure modes not seen in real-time aging. Are the test conditions invalidating our study?

A1: Not necessarily. ALT accelerates relevant degradation mechanisms. Premature failure can indicate an over-stress condition or an incorrect acceleration model. First, verify your acceleration factor (AF) using the Arrhenius model for thermal aging: k = A exp(-Ea/(RT)), where Ea is activation energy (typical for hydrolysis: 50-95 kJ/mol). Ensure your test temperature does not exceed the polymer's glass transition temperature (Tg), as this alters the fundamental degradation pathway. Cross-validate by comparing chemical degradation products (e.g., via HPLC) from ALT samples with those from real-time aged samples at a lower stress level.
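The acceleration factor follows from taking the ratio of two Arrhenius rate constants, AF = k_test / k_use = exp[(Ea/R)(1/T_use − 1/T_test)] with temperatures in kelvin. The sketch below is a minimal illustration; the function name is hypothetical and the 70 kJ/mol activation energy is an assumed value from within the hydrolysis range quoted above.

```python
import math

R_GAS = 8.314  # universal gas constant, J/(mol*K)

def arrhenius_af(ea_j_per_mol, t_use_c, t_test_c):
    """Thermal acceleration factor between use and test temperatures (deg C)."""
    t_use_k = t_use_c + 273.15
    t_test_k = t_test_c + 273.15
    return math.exp(ea_j_per_mol / R_GAS * (1.0 / t_use_k - 1.0 / t_test_k))

# Assumed Ea = 70 kJ/mol, storage at 25 C, accelerated aging at 55 C
af = arrhenius_af(70_000.0, t_use_c=25.0, t_test_c=55.0)
print(f"AF = {af:.1f}; 1 year of real time takes about {365.0 / af:.0f} days")
```

Because AF depends exponentially on Ea, a small error in the assumed activation energy changes the predicted shelf life substantially, which is why the cross-validation step matters.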

Q2: How do we determine the appropriate sample size for a shelf-life study of a monoclonal antibody in prefilled syringes?

A2: Sample size depends on desired confidence, variability, and shelf-life target. For a degradation kinetics study, use ICH Q1E guidance. A common approach for a zero-order degradation model:

n ≥ [ (Z_α + Z_β) * σ / δ ]^2

Where:

- n = sample size per time point

- Z_α = Z-value for the significance level (1.96 for two-sided 95% confidence)

- Z_β = Z-value for power (0.84 for 80% power)

- σ = estimated standard deviation of the potency assay

- δ = acceptable loss in potency at end of shelf-life

For a typical study with 3 batches, 7 time points (0, 3, 6, 9, 12, 18, 24 months), and triplicate analysis, 21 samples per batch (63 in total) are tested.
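The sample size formula above can be evaluated with a few lines of Python. This is a minimal sketch: the function name `samples_per_time_point` and the example σ and δ values are hypothetical, and the Z-values are derived from the standard normal distribution rather than hard-coded.

```python
import math
from statistics import NormalDist

def samples_per_time_point(sigma, delta, alpha=0.05, power=0.80):
    """Smallest n satisfying n >= [(Z_alpha + Z_beta) * sigma / delta]^2."""
    z_alpha = NormalDist().inv_cdf(1.0 - alpha / 2.0)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)               # about 0.84 for 80% power
    return math.ceil(((z_alpha + z_beta) * sigma / delta) ** 2)

# Hypothetical assay: standard deviation of 2% potency, acceptable loss of 3% potency.
print(samples_per_time_point(sigma=2.0, delta=3.0))
```

Because n grows with the square of σ/δ, halving assay variability reduces the required replicates roughly fourfold.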

Q3: Our accelerated stability data for a cardiovascular stent coating shows nonlinear degradation. Can we still predict shelf-life?

A3: Yes, but linear extrapolation is invalid. You must identify the correct kinetic model (e.g., first-order, autocatalytic). Fit data to multiple models (zero-order, first-order, Weibull) and use the Akaike Information Criterion (AIC) to select the best fit. The shelf-life is then predicted by solving the nonlinear equation for the time at which the critical quality attribute (e.g., drug elution rate) reaches its lower specification limit. Always include a "failure time" distribution analysis (using Weibull or Lognormal plots) for mechanical integrity data.
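The model-selection step can be sketched with the standard library alone. The example below compares only zero-order and first-order fits (the Weibull model is omitted for brevity), uses a simple scan over the rate constant as a stand-in for a proper nonlinear least-squares routine, and runs on synthetic data invented for illustration. All function names are hypothetical.

```python
import math

def fit_zero_order(t, y):
    """Least-squares fit of y = a + b*t. Returns ((a, b), residual sum of squares)."""
    n = len(t)
    t_mean = sum(t) / n
    y_mean = sum(y) / n
    sxx = sum((ti - t_mean) ** 2 for ti in t)
    sxy = sum((ti - t_mean) * (yi - y_mean) for ti, yi in zip(t, y))
    b = sxy / sxx
    a = y_mean - b * t_mean
    rss = sum((yi - (a + b * ti)) ** 2 for ti, yi in zip(t, y))
    return (a, b), rss

def fit_first_order(t, y, k_max=1.0, steps=2000):
    """Fit y = a*exp(-k*t) by scanning k; for each k the best a is closed-form."""
    best = None
    for i in range(1, steps + 1):
        k = k_max * i / steps
        e = [math.exp(-k * ti) for ti in t]
        a = sum(ei * yi for ei, yi in zip(e, y)) / sum(ei * ei for ei in e)
        rss = sum((yi - a * ei) ** 2 for ei, yi in zip(e, y))
        if best is None or rss < best[1]:
            best = ((a, k), rss)
    return best

def aic(rss, n, n_params):
    """AIC for a least-squares fit with Gaussian errors (up to an additive constant)."""
    return n * math.log(rss / n) + 2 * n_params

def shelf_life_first_order(a, k, lower_limit):
    """Time at which a*exp(-k*t) falls to the lower specification limit."""
    return math.log(a / lower_limit) / k

# Synthetic, illustrative data only: months vs. % of initial attribute value.
months = [0, 3, 6, 9, 12, 18, 24]
values = [100.3, 91.0, 83.9, 76.0, 70.1, 58.0, 48.9]

_, rss_zero = fit_zero_order(months, values)
(a_hat, k_hat), rss_first = fit_first_order(months, values)
n_obs = len(months)
aic_zero = aic(rss_zero, n_obs, 2)
aic_first = aic(rss_first, n_obs, 2)

best_model = "first-order" if aic_first < aic_zero else "zero-order"
print("selected model:", best_model)
print("time to reach 80% limit (months):",
      round(shelf_life_first_order(a_hat, k_hat, 80.0), 1))
```

The lower AIC identifies the preferred model; with real data, a dedicated fitting library and confidence bounds on the predicted shelf-life would normally replace the coarse scan used here.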

Q4: In a humidity-controlled ALT for a diagnostic test strip, how do we calculate the humidity acceleration factor?

A4: The Peck model is standard: AF_humidity = (RH_test / RH_use)^n. The exponent n is typically between 2 and 3 for polymeric materials. For precise calculation, conduct tests at two elevated humidity levels (e.g., 65% RH and 75% RH at constant temperature) to solve for n. The combined temperature-humidity AF is: AF_total = AF_temp (Arrhenius) * AF_humidity (Peck).
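The two-humidity approach for estimating n, and the combined acceleration factor, can be sketched as follows. This assumes the degradation rate scales as RH^n at constant temperature; the function names and example conditions are hypothetical.

```python
import math

R = 8.314  # universal gas constant, J/(mol*K)

def peck_exponent(rate_a, rh_a, rate_b, rh_b):
    """Solve rate ~ RH^n for n from rates measured at two humidity levels
    (same temperature)."""
    return math.log(rate_a / rate_b) / math.log(rh_a / rh_b)

def peck_af(rh_test, rh_use, n):
    """Humidity acceleration factor (RH_test / RH_use)^n."""
    return (rh_test / rh_use) ** n

def arrhenius_af(ea_kj_per_mol, t_use_c, t_test_c):
    """Thermal acceleration factor; temperatures in degrees Celsius."""
    t_use_k = t_use_c + 273.15
    t_test_k = t_test_c + 273.15
    return math.exp((ea_kj_per_mol * 1000.0 / R) * (1.0 / t_use_k - 1.0 / t_test_k))

def combined_af(ea_kj_per_mol, t_use_c, t_test_c, rh_use, rh_test, n):
    """AF_total = AF_temp (Arrhenius) * AF_humidity (Peck)."""
    return arrhenius_af(ea_kj_per_mol, t_use_c, t_test_c) * peck_af(rh_test, rh_use, n)

# Hypothetical rates (arbitrary units) measured at 65% and 75% RH, same temperature.
n_est = peck_exponent(rate_a=1.45, rh_a=75.0, rate_b=1.00, rh_b=65.0)
print("estimated Peck exponent:", round(n_est, 2))
print("combined AF:", round(combined_af(70, 25, 40, 60, 75, n_est), 1))
```

Two humidity levels give a single point estimate of n; a third level would allow the power-law assumption itself to be checked.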

Q5: Our real-time shelf-life data and ALT predictions have a >15% discrepancy. Which data should we trust for regulatory submission?

A5: Real-time data always takes precedence. The discrepancy indicates your ALT model requires calibration. Investigate: 1) Mechanistic shift: Did high stress induce a new chemical pathway (e.g., oxidation vs. hydrolysis)? Perform FTIR or GC-MS. 2) Incorrect Ea: Use real-time data from at least 6 months to recalculate a more accurate Ea. 3) Package interaction: ALT may accelerate moisture ingress differently than real time. Consider using the worst-case real-time data point to set a conservative initial shelf-life, with a commitment to ongoing stability studies.

Key Experimental Protocols

Protocol 1: Determining Activation Energy (Ea) for a Biomedical Polymer

- Sample Preparation: Prepare identical samples (n=15 per group) of the device/material.

- Stress Conditions: Place samples in controlled environmental chambers at three elevated temperatures (e.g., 50°C, 60°C, 70°C) ±2°C, keeping all other factors (humidity, pH) constant at use-level conditions.

- Sampling Intervals: Remove n=3 samples from each chamber at five time intervals (e.g., 1, 2, 4, 8, 12 weeks).

- Measure CQA: Measure the primary Critical Quality Attribute (e.g., molecular weight via GPC, tensile strength).

- Data Fitting: For each temperature, plot the degradation metric vs. time. Determine the degradation rate constant (k) at each temperature.

- Arrhenius Plot: Plot ln(k) against 1/T (where T is in Kelvin). Perform linear regression. The slope is equal to -Ea/R. Calculate Ea.
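The final regression step of Protocol 1 can be sketched as an ordinary least-squares fit of ln(k) against 1/T. The rate constants below are generated from an assumed Ea purely to show that the calculation recovers it; they are not measured values, and the function name is hypothetical.

```python
import math

R = 8.314  # universal gas constant, J/(mol*K)

def activation_energy_kj(temps_c, rate_constants):
    """Fit ln(k) = ln(A) - (Ea/R) * (1/T) and return (Ea in kJ/mol, A)."""
    x = [1.0 / (t + 273.15) for t in temps_c]
    y = [math.log(k) for k in rate_constants]
    n = len(x)
    x_mean = sum(x) / n
    y_mean = sum(y) / n
    slope = (sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
             / sum((xi - x_mean) ** 2 for xi in x))
    intercept = y_mean - slope * x_mean
    ea_kj = -slope * R / 1000.0  # slope = -Ea/R
    return ea_kj, math.exp(intercept)

# Illustrative check: rate constants synthesized from an assumed Ea of 80 kJ/mol.
temps_c = [50.0, 60.0, 70.0]
assumed_ea_j = 80_000.0
ks = [1e10 * math.exp(-assumed_ea_j / (R * (t + 273.15))) for t in temps_c]

ea_fit, a_fit = activation_energy_kj(temps_c, ks)
print("fitted Ea (kJ/mol):", round(ea_fit, 2))
```

With only three temperatures the fit has a single degree of freedom, so the linearity of the Arrhenius plot should be inspected visually before the fitted Ea is used for extrapolation.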

Protocol 2: Zero-Time Recovery for Shelf-Life Study

- Purpose: Establish the baseline (time-zero) properties after product sterilization and packaging.

- Procedure:

- Use three independent product batches.

- Within 24 hours of final package sealing, place samples into the long-term stability chamber (e.g., 25°C/60%RH).

- Simultaneously, test a separate set of samples from the same batches immediately for all release and stability-indicating attributes (assay, impurities, particulates, functionality).

- This immediate testing data is the official "time-zero" point for the stability study.

Table 1: Typical Activation Energies for Common Degradation Pathways

| Degradation Mechanism | Typical Materials/Products | Activation Energy (Ea) Range (kJ/mol) | Common ALT Stressors |

|---|---|---|---|

| Hydrolysis | Poly(lactic-co-glycolic acid) (PLGA), Peptides | 50 - 95 | Temperature, Humidity |

| Oxidation | Polyethylene, Proteins, Lipids | 80 - 120 | Temperature, Oxygen Pressure |

| Physical Aging (Relaxation) | Amorphous Polymers, Glassy Systems | 200 - 400 | Temperature |

| Denaturation/Aggregation | Monoclonal Antibodies, Enzymes | 200 - 500 | Temperature, pH, Shear |

Table 2: Sample Size Guideline for Shelf-Life Estimation (Based on ICH Q1A(R2))

| Study Phase | Minimum Batches | Minimum Time Points (Long-Term) | Testing Frequency (Long-Term) | Storage Conditions |

|---|---|---|---|---|

| Registration (New Drug) | 3 primary stability | 0, 3, 6, 9, 12, 18, 24 months; annually thereafter | Every 3 months year 1, 6 months year 2, annually after | 25°C ± 2°C / 60% ± 5% RH |

| Accelerated | 3 | 0, 3, 6 months | 3 and 6 months | 40°C ± 2°C / 75% ± 5% RH |

| Intermediate* | 3 | 0, 6, 9, 12 months | 6, 9, 12 months | 30°C ± 2°C / 65% ± 5% RH |

*Required if significant change occurs at accelerated condition.

Diagrams

Title: ALT and Shelf-Life Prediction Workflow

Title: Common Degradation Pathways in Biomedical Devices

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for ALT & Shelf-Life Studies

| Item | Function | Example/Supplier Note |

|---|---|---|

| Environmental Chambers | Precisely control temperature (±0.5°C) and relative humidity (±2% RH) for long-term stability testing. | Weiss Technik, Binder, ThermoFisher. |

| Hydrolytic Degradation Buffers | Maintain specific pH to simulate physiological conditions or accelerate hydrolysis. | Phosphate-buffered saline (PBS, pH 7.4), acetate buffer (pH 5.0). |

| Oxygen Scavengers/Antioxidants | Control oxidative stress levels; used to validate oxidation pathways or as stabilizing excipients. | Ascorbic acid, α-Tocopherol. |

| Stability-Indicating Assay Kits | Quantify specific degradation products (e.g., protein aggregates, free acid from ester hydrolysis). | Size-exclusion HPLC kits, ELISA for protein aggregates. |

| Data Loggers | Monitor and record temperature/humidity inside chambers or product packaging continuously. | Dickson, Omega Engineering. |

| Mechanical Testers | Quantify degradation of physical properties (tensile strength, modulus, burst pressure). | Instron, MTS systems. |

| Reference Standard Materials | Well-characterized materials with known stability profile for method calibration and validation. | NIST traceable standards (e.g., polymer molecular weight standards). |

| Barrier Packaging Materials | For studying package-device interactions (e.g., moisture ingress, leachable extraction). | Tyvek, various medical-grade polymer films and foils. |

Usability Engineering and Human Factors Testing (IEC 62366)

Welcome to the Technical Support Center. This resource provides targeted guidance for researchers, scientists, and drug development professionals integrating Usability Engineering and Human Factors Testing (per IEC 62366) into biomedical device testing and validation methodologies.

FAQs & Troubleshooting Guides

Q1: During a formative usability test, multiple participants misinterpret a critical alarm signal on our infusion pump prototype. What immediate steps should we take, and how does this impact our validation timeline? A: This is a critical use-related hazard. Immediately:

- Pause testing and document the issue as a potential Use Error.

- Conduct a root cause analysis: Is it the auditory tone, visual alert, placement, or lack of user training?

- Implement a design change to mitigate the risk (e.g., modify alarm sound, add a flashing red LED).

- Re-test the modified interface with a new participant subset to verify effectiveness.

This will impact your timeline, because each design iteration must be re-tested. Document the process thoroughly as evidence of iterative design for your regulatory submission.

Q2: How do we determine the appropriate number of participants for a summative usability test to satisfy IEC 62366-1 requirements? A: IEC 62366-1 does not prescribe a specific number. The sample size must be justified and representative of the user population. Current industry practice, supported by human factors research, is summarized below:

Table 1: Common Sample Size Justifications for Summative Usability Testing

| Justification Method | Typical Sample Size per User Group | Rationale & Application |

|---|---|---|

| Saturation of Use Errors | 15-25 participants | Testing continues until no new use errors or problems are discovered. Requires iterative analysis during recruitment. |

| Statistical Confidence | 22-27 participants | Provides ~90% probability of observing a problem that occurs with a 10% probability in the user population. |

| Benchmarking | 10-12 participants | Used when comparing a new interface to a well-understood legacy device. Must be justified with prior data. |
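The "Statistical Confidence" row follows from a simple binomial argument: if each participant independently encounters a given problem with probability p, the chance of seeing it at least once among n participants is 1 - (1 - p)^n. The sketch below assumes that independence; the function names are hypothetical.

```python
import math

def p_observe_at_least_once(p_problem, n_participants):
    """Probability that a problem with per-user probability p_problem
    appears at least once among n_participants independent users."""
    return 1.0 - (1.0 - p_problem) ** n_participants

def participants_needed(p_problem, target_probability):
    """Smallest n for which the detection probability reaches the target."""
    return math.ceil(math.log(1.0 - target_probability) / math.log(1.0 - p_problem))

for n in (15, 22, 27):
    print(n, round(p_observe_at_least_once(0.10, n), 3))
print("n for 90% detection of a 10% problem:", participants_needed(0.10, 0.90))
```

The same calculation shows why rarer use errors need much larger samples or complementary analytical methods.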

Q3: Our human factors validation protocol includes a "simulated use" study in a lab. Will regulatory bodies accept this, or is "actual use" in a clinical setting required? A: For most devices, simulated use testing in a controlled environment is acceptable and standard. The key is fidelity. The simulation must replicate the critical tasks, use environment stressors (e.g., noise, distractions), and device interface accurately. Actual use studies are typically reserved for post-market surveillance. Document your rationale for the simulation's fidelity in your protocol.

Q4: How should we handle the discovery of a new use error during the final summative validation test? A: Follow your pre-defined Risk Management Process (ISO 14971):

- Analyze Severity: Determine the potential harm severity.

- Update Risk File: Document the new hazardous situation and estimate risk.

- Mitigate: If the risk is unacceptable, a design change is mandatory, and summative testing must be repeated. If the residual risk is acceptable (for example, reduced as low as reasonably practicable, ALARP), you may proceed but must document the residual risk and justify its acceptability in your Usability Engineering File.

Experimental Protocol: Formative Usability Evaluation

Objective: To identify and rectify use-related problems and use errors early in the device development process.

Methodology:

- Prepare: Define test objectives and critical tasks. Recruit 5-8 representative users per distinct user profile (e.g., nurse, patient).

- Set Up: Use a high-fidelity prototype in a simulated environment (e.g., lab mock-up of a hospital room).

- Conduct Session: Facilitator gives a pre-defined scenario. Participant performs tasks using the "think-aloud" protocol. Data is collected via video, audio, and observer notes.

- Analyze: Transcribe and code sessions. Categorize all observed difficulties, near-misses, and errors. Map each to a potential use-related hazard.

- Iterate: Prioritize issues based on risk and implement design modifications. Re-test in subsequent formative rounds.

Diagram: IEC 62366 Usability Engineering Process Flow

The Scientist's Toolkit: Key Research Reagents & Materials

Table 2: Essential Materials for Human Factors Testing of Biomedical Devices

| Item / Solution | Function in Usability Testing |

|---|---|

| High-Fidelity Prototype | Interactive model of the device used for testing; can be physical or software-based. Must replicate the final user interface. |

| Simulated Use Environment | Controlled lab space configured to mimic key aspects of the actual use environment (e.g., hospital room, home setting) to provide contextual cues. |

| Participant Recruitment Screener | Document to ensure test participants accurately represent the intended users in terms of profession, experience, demographics, and abilities. |

| Test Protocol & Scenario Script | Standardized document outlining test procedures, facilitator instructions, and the clinical scenarios given to participants to ensure consistent data collection. |

| Data Recording System | Audio-visual equipment (cameras, microphones) and software to capture participant interactions, facial expressions, and verbal feedback for analysis. |